
Lab Methodology

Lab Manual

IMPORTANT: The methodology, metrics (d), and algorithms presented in this lab manual are designed exclusively for use by licensed legal professionals and qualified academic scholars.

- Unauthorized Practice of Law (UPL): Cross-jurisdictional legal comparison carries inherent UPL risks. Pursuant to standards such as ABA Model Rule 5.5 and CCBE Code of Conduct, Art. 5.2, competent verification of foreign law often requires consultation with locally licensed or dual-qualified counsel. This tool does not authorize practice in unadmitted jurisdictions.

- Duty of Independent Verification: In accordance with prevailing professional standards (e.g., ABA Formal Op. 512; EU AI Act, Art. 14), all computational and AI-assisted outputs generated through this methodology must be independently verified by a qualified human attorney for doctrinal integrity and accuracy. The Human-in-the-Loop (HITL) reviewer assumes professional and intellectual liability for the accuracy of the final comparison.

- Not Legal Advice: The metrics and classifications generated by this framework constitute academic and empirical legal analysis. They do not constitute individualized legal advice, and no attorney-client relationship is formed through their publication or use.

1.0 Executive Summary: Standardized Comparative Metric of Legal Distance over Space and Time

Comparative.law Lab Manual: Version 4.0 (2026)

What is Computational Comparative Law?

Computational comparative law is the application of quantitative and empirical methods, Artificial Intelligence (AI), and Natural Language Processing (NLP) to analyze the similarities, differences, and the evolution of legal systems. It utilizes “Computational Jurimetrics” and algorithmic scaling to identify these relationships through quantifiable metrics (the d-score).

By converting abstract doctrinal analysis into quantifiable, structured, computable data, it enables the measurement of legal distance across the spatial dimension (different jurisdictions) and the temporal dimension (legal history), scaling traditional scholarship beyond manual human processing capacity.

- The Computational Equivalence Methodology: This lab manual presents a quantifiable, structured, computable, and falsifiable methodology for measuring the “legal distance” (d) between comparable legal terms, rules, institutions, or concepts across the spatial dimension (different jurisdictions) and the temporal dimension (legal history). By operationalizing the functionalist method of Zweigert and Kötz into a computable taxonomy, and incorporating the multidimensional perspective of Roscoe Pound’s ‘Space and Time’ analysis, the framework moves comparative law from manual qualitative observation to empirical calibration. As the computational extension of classical comparative law, the d-score methodology provides the ‘ground truth’ needed for large-scale digital analysis in the age of Artificial Intelligence. The framework is designed to satisfy the mandatory Human-in-the-Loop (HITL) oversight and independent verification requirements of ABA Formal Op. 512, Article 14 of the EU AI Act, ABA Model Rule 1.1 (Comment 8), and the CCBE Code of Conduct, Art. 5.2. By providing a falsifiable ‘ground truth’, it helps practitioners and legal scholars maintain doctrinal integrity and satisfy their duty of technological competence when working with Artificial Intelligence in cross-jurisdictional (spatial) and intra-jurisdictional (temporal) environments.

- Standardized Comparative Metric (d): This framework establishes Legal Distance (d) as the invariant unit for quantifying jurisdictional convergence across space and time. It is a calibrated, 31-point numerical index (0.0 to 3.0, in steps of 0.1) that locates a legal concept precisely on the Equivalence Spectrum. By moving comparative law from manual qualitative observation to empirical calibration, this metric provides the necessary “ground truth” for large-scale digital analysis.

- Classical-Computational Hybrid Methodology: This framework blends the qualitative, interpretative power of classical comparative law with the quantitative scale and precision of modern computational metrics. It does not advocate replacing classical legal scholarship with automated systems. Instead, it proposes a hybrid methodology expressed by the logic A (Classical) + B (Computational) = C (The Hybrid Outcome). By combining the interpretative depth of classical comparative law (A) with the scale and precision of computational metrics (B), the methodology achieves an optimal outcome (C): it preserves the essential ‘spirit of the law’ found in traditional narratives while satisfying the rigorous, auditable requirements of the digital age.

The Classical-Computational Methodological Equation: A + B = C

A (Classical) + B (Computational) = C (The Hybrid Outcome).

| Phase of the Workflow | Classical Foundation (The "Logic") | Computational Scale (The "Engine") | Hybrid Outcome (The "Standard") |
|---|---|---|---|
| 1. Categorization | Functionalist Inquiry: Identifies the "praesumptio similitudinis" (presumption of similarity). | Algorithmic Filtering: Ingests massive datasets to isolate functionally equivalent outcomes. | Verified Scope: A structurally sound dataset ready for calibration. |
| 2. Calibration | Qualitative Nuance: Provides the "spirit of the law" and historical context. | Metric Calculation (d): Assigns a precise numerical value to jurisdictional distance. | Calibrated Position: A precise, data-backed metric informed by expert nuance. |
| 3. Validation | Scholarly Authentication: Final audit for doctrinal integrity and HITL oversight. | Audit Trail Generation: Creates the computable record for regulatory compliance. | Regulatory Fit: A "Gold Standard" report that satisfies Art. 14 EU AI Act and ABA Formal Op. 512. |

- Computational Equivalence Engine (v1.0): To facilitate large-scale empirical research, the framework includes an official technical implementation—a Python-based computational engine. This tool automates the three-step Algorithmic Filter, allowing researchers to calculate precise Legal Distance scores (d) and Convergence Vectors (Vlegal) across digital datasets.

- Bayesian Priors & Falsifiability: To ensure scientific rigor in data-void environments, the methodology uses expert elicitation to establish falsifiable Bayesian Priors. By establishing a predictive baseline through professional consensus, the framework allows quantitative comparison that remains empirical and open to falsification as new case law emerges. Any scholar who disagrees with a specific Legal Distance score is therefore invited to provide empirical data or documented precedents to recalibrate the metric, shifting the discourse from a subjective argument over terminology to an objective refinement of the data. The d-score is thus not a static opinion but a “scientific hypothesis”, subject to revision as data scales.

- Unified Coordinate System: Beyond static cross-jurisdictional comparison, this framework extends its logic to the dimension of time by introducing the Legal Convergence Vector (Vlegal). By applying a single invariant metric (d) to measure both jurisdictional difference (space) and historical evolution (time), this methodology enables disparate legal systems and historical precedents to be precisely calibrated against one another. This establishes a Unified Coordinate System for law—conceptually analogous to a general theory of relativity for legal dynamics—offering a scalable, computable blueprint for the future of the field.

- The Principle of Legal Relativity: This framework operates on the principle of legal relativity, which posits that the identity of a legal term, rule, institution, or concept is defined by its mathematical position relative to other points in a Unified Coordinate System. By treating law not as a static set of rules but as a dynamic legal reality moving through Space (jurisdictional variation) and Time (historical evolution), the methodology measures legal distance over space and time through the d-score, while the Vlegal vector quantifies the exact rate of jurisdictional convergence or divergence.

- Human-in-the-Loop (HITL) & Scholarly Authentication: To satisfy the duty of independent verification (e.g., ABA Formal Op. 512; EU AI Act, Art. 14), this methodology treats raw algorithmic output as a preliminary diagnostic. All d-scores and Vlegal vectors are subject to a Scholarly Authentication protocol, in which a qualified human expert performs a Jurisprudential Audit to ensure doctrinal integrity and assumes professional and intellectual liability for the final comparison.
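
The manual does not specify how an expert-elicited prior is updated. As one illustration only, the sketch below assumes the prior is placed on the Reliability Rate (R) and modeled as a Beta distribution, a standard conjugate choice for a proportion, so that each new case in which the Source and Target systems do or do not reach the same Practical Outcome shifts the estimate. The class `BetaReliability`, its methods, and every number in the example are hypothetical and are not part of the Computational Equivalence Engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaReliability:
    """Hypothetical Beta(alpha, beta) belief about the Reliability Rate R."""

    alpha: float
    beta: float

    @classmethod
    def from_expert(cls, r_estimate: float, weight: float) -> "BetaReliability":
        # Encode an expert-consensus estimate of R as a Beta prior whose mean
        # equals r_estimate; `weight` acts like a count of pseudo-cases, i.e.
        # how strongly the panel's estimate resists new evidence.
        if not 0.0 < r_estimate < 1.0 or weight <= 0:
            raise ValueError("r_estimate must lie in (0, 1) and weight must be > 0")
        return cls(alpha=r_estimate * weight, beta=(1.0 - r_estimate) * weight)

    def update(self, same_outcome: int, different_outcome: int) -> "BetaReliability":
        # Conjugate Beta-binomial update: matching outcomes add to alpha,
        # divergent outcomes add to beta.
        return BetaReliability(self.alpha + same_outcome, self.beta + different_outcome)

    @property
    def mean(self) -> float:
        # Point estimate of R under the current belief.
        return self.alpha / (self.alpha + self.beta)


# Hypothetical figures: an expert panel estimates R at 92% (weight of 20
# pseudo-cases); 20 newly decided cases then show 14 matches and 6 divergences.
prior = BetaReliability.from_expert(r_estimate=0.92, weight=20)
posterior = prior.update(same_outcome=14, different_outcome=6)
print(round(prior.mean, 2), round(posterior.mean, 2))  # 0.92 0.81
```

Under these invented numbers the estimate falls from 0.92 to 0.81, below the 85% floor the manual sets for even a Weak Functional Equivalent. That is the kind of documented evidence the Bayesian Priors & Falsifiability point invites for recalibrating a score.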

Download Full Methodology PDF on SSRN

Version History

- Version 3.0 (Released 2026): Initial web manual publication.

- Terminology Update: The term “Vector of Legal Convergence Formula” replaces “Velocity Formula” to accurately reflect the vector-based calculation that measures both the magnitude and direction of legal evolution (Vlegal = d(t1) – d(t2)).

2.0 Practical Applications & Use Cases

The Computational Equivalence Methodology is built for versatility, providing a scalable framework for diverse practical applications. By operationalizing the Legal Distance (d) metric and the Legal Convergence Vector (Vlegal), researchers and practitioners can quantify relationships across legal, political, and economic domains that were previously limited to manual qualitative observation. This Classical-Computational Hybrid Methodology (A+B=C) serves as a roadmap for scholars adapting to the Age of Artificial Intelligence, providing the rigorous logical structure necessary to satisfy the duty of independent verification and to govern algorithmic outputs with professional responsibility. The following twelve foundational applications, grouped into Systemic, International, and Domestic/Market domains, illustrate how this framework turns comparative law into a field of empirical calibration.

Foundational & Systemic Analysis

- Empirical Testing of Doctrinal Hypotheses: Move from qualitative assessments to empirical testing by using the d-score to establish a falsifiable numerical baseline for doctrinal hypotheses. This allows the equivalence of statutory and constitutional rights to be measured by testing structural foundations (M, P) against practical results (R, Pr, N).

- Mapping Systemic Convergence and Divergence: Calculate the magnitude of spatial and temporal convergence or divergence between entirely distinct legal systems (e.g., Common Law vs. Civil Law). Use the d-score to quantify jurisdictional separation and map the Vlegal trajectory of entire legal families.

- AI Training & Algorithmic Benchmarking: Establish “ground truth” datasets to train, benchmark, and audit Large Language Models (LLMs). Use the d-score to provide a computable value that mitigates morphological hallucinations (M) and the conflation of “false friends”—cases where (M) and (P) overlap but outcomes diverge.

- Ethical AI Verification & Compliance: Provide a structured, auditable “White Box” framework to satisfy the mandatory duty of independent verification (e.g., EU AI Act, Art. 14). By utilizing the d-score and Vlegal vector, practitioners can demonstrate rigorous Human-in-the-Loop (HITL) oversight and maintain doctrinal integrity.

- Unlocking Interdisciplinary & STEM Funding Opportunities: Bridge the gap between jurisprudence and data science by converting abstract doctrinal analysis into structured, computable data (d-score) required for STEM grants (e.g., NSF, Horizon Europe). This positions legal scholars to compete for funding requiring rigorous empirical metrics and algorithmic benchmarking.

International & Supranational Frameworks (Treaty & EU Analysis)

- Legal Transplants & Supranational Integration: Measure the implementation of legal transplants and the “integration gap” between a mandate and its functional absorption using the d-score. Track whether the domestic (M) and (P) align with the intended (R, Pr, N) of supranational rules such as EU Directives or the UN Convention on Contracts for the International Sale of Goods (CISG).

- Reciprocal Enforcement and Application of International Law: Monitor functional symmetry and quantify the reciprocal application of rights in international treaties (e.g., the Hague Service Convention or the Vienna Convention’s Notice of Consular Rights). Use the d-score to verify that civil and economic rights are consistently protected across Source (S) and Target (T) jurisdictions.

- Computational Lexicography & Translation Precision: Provide a measurable baseline for legal translators and international drafters by using the d-score to distinguish between Functional/Total Equivalents (d=0.0–1.9) for high-fidelity translation, structural “False Friends” (Partial Equivalents, d=2.0–2.9) that require caution, and cases with No Direct Legal Equivalent (d=3.0) where direct translation is prohibited to prevent legal error. This ensures precision and prevents the fabrication of “hallucinated equivalents” when harmonizing multilingual treaties, codes, contracts, or global corporate policies.

Domestic, Market, & Political Dynamics

- Intra-Jurisdictional & Sub-National Comparison: Apply the framework domestically to measure the legal distance and jurisdictional friction between internal regulatory bodies (e.g., state versus state, federal versus state, or municipality versus municipality) by using the d-score to map either the magnitude of relational divergence between peer jurisdictions or the degree of separation from a uniform baseline—such as the Federal Rules of Civil Procedure (FRCP) or Model Acts like the Uniform Commercial Code (UCC) or the Model Penal Code.

- Regulatory Forecasting, Quantitative Legal History & Real-Time Jurisprudential Monitoring: Identifying Vlegal trends allows firms to prepare for structural Feature Shifts (changes in M or P) before they are finalized in formal legislation. Persistent Legal Drift (fluctuations in the operational variables R, Pr, N) often serves as a leading indicator of systemic realignment. Apply the temporal dimension (Vlegal) to track historical evolution and systemic ruptures. Use Real-Time Jurisprudential Monitoring to assign a Pre-Change (t1) and Post-Change (t2) d-score that quantifies how a single event, such as a Supreme Court ruling, Executive Order, or legislative enactment, affects the trajectory of legal convergence.

- Law Market and Regulatory Competition: Evaluate regulatory competition and jurisdictional arbitrage. Use the d-score and Vlegal to identify “The Delaware Effect” and determine the most efficient legal environment for commercial activities (e.g., IP licensing or digital assets). This is achieved by identifying the Decoupling Gap between formal structural definitions (M, P) and actual operational efficiency—characterized by lower Procedural Friction (Pr), higher Reliability (R), and a lower Iteration Threshold (N).

- Rule of Law, Political Risk & Institutional Stability: Quantify institutional risk by using the d-score to measure shifts in core constitutional and regulatory frameworks. By identifying the Decoupling Gap between the structural foundations—Morphology (M) and Teleology (P)—versus the actual Practical Outcomes (R, Pr, N), this provides an empirical metric to track the Vlegal trajectory of democratic backsliding or restoration. This allows investors and financial institutions to assess the true stability of the “Rule of Law” (e.g., judicial independence or human rights) as a standardized comparative metric.

To operationalize any of the twelve foundational applications listed above, the practitioner must first translate the specific research question into a Computational Equivalence Query (CEQ) as defined in Section 4.1. The CEQ serves as the mandatory logical gateway that converts these diverse legal, political, and financial domains into a structured, computable format.

Note on Methodological Neutrality

This framework is designed as an apolitical, empirical instrument. The Legal Distance metric (d) and the Convergence Vector (Vlegal) measure the magnitude and direction of legal shifts, regardless of political or ideological preference. For example, in a scenario where a government shifts data privacy enforcement or market competition oversight from an independent supervisory authority to a direct executive department, the methodology provides a neutral measurement of the resulting divergence from comparable peer institutions (e.g., the EU’s European Data Protection Board or the European Commission). While scholars and policymakers may disagree on the normative value of such a shift, the computational methodology provides a standardized, objective “ground truth” that both sides can utilize for factual analysis.

3.0 The Equivalence Spectrum

Computational Equivalence is a computable taxonomy and standardized logic used to define the degree of comparability between legal concepts across different jurisdictions. It moves beyond simple binary distinctions to classify the relationship between legal terms using a continuous 31-point scale to quantify Legal Distance (d) across both the spatial (jurisdictional) and temporal (historical) dimensions. This section establishes the foundational definitions for equivalence, details the Four Data Classes required for computability, and introduces the Unified Coordinate System—a mathematical framework used to calibrate disparate legal regimes on a single, computable scale.

3.1 Foundational Definitions

To apply this taxonomy, we must first establish two foundational definitions:

- Legal Equivalence: A legal term, rule, institution, or concept used by legal professionals in one jurisdiction that has a degree of correspondence or comparability to a legal term, rule, institution, or concept in another jurisdiction. This degree of equivalence is determined by the overlap in their Morphology / Legal Definition (M), Teleology / Legal Purpose (P), and Practical Outcome (R, Pr, N). It is a spectrum, not an absolute, and is categorized into four distinct, computable levels.

- Legal Distance (d): A numerical index representing the precise position of a legal term, rule, institution, or concept on the 31-point Legal Equivalence Spectrum. It quantifies the degree of separation based on the calibrated overlap of Morphology / Legal Definition (M), Teleology / Legal Purpose (P), and Practical Outcome (R, Pr, N), ranging from Total Equivalence (d=0.0) to No Direct Equivalent (d=3.0).

- To ensure the d-score is both computable and auditable, the numerical index is divided into two distinct data layers:

- The Integer (Level Determinant): Indicates the Primary Classification Level (1, 2, 3, or 4). This value is determined by the structural overlap of the Legal Variables: Morphology / Legal Definition (M) and Teleology / Legal Purpose (P).

- Integer 0 = Level 1

- Integer 1 = Level 2

- Integer 2 = Level 3

- Integer 3 = Level 4

- The Decimal (Confidence Determinant): Indicates the Confidence Interval of the match (.0 to .9), representing the strength or fidelity of the correspondence. This value is determined by the Application Variables: Reliability (R), Procedural Friction (Pr), and the Iteration Threshold (N).

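
The split into an Integer layer and a Decimal layer can be sketched as below. This is a minimal illustration, not the Computational Equivalence Engine: the function name `split_d_score` is hypothetical, and scores are snapped to the 0.1 grid with integer arithmetic so that floating-point noise cannot change the tenths digit. The sketch only separates the two layers; because the ranges in Section 3.2 place d = 0.1–0.9 in Level 2 even though their integer is 0, the level itself is best read from those ranges.

```python
def split_d_score(d: float) -> tuple[int, int]:
    """Return (integer layer, decimal digit) for a d-score on the 31-point scale.

    The integer is the Level Determinant; the tenths digit (0-9) is the
    Confidence Determinant.
    """
    tenths = round(d * 10)
    # Reject values off the 0.1 grid (e.g. 1.25) or outside 0.0-3.0.
    if abs(d * 10 - tenths) > 1e-6 or not 0 <= tenths <= 30:
        raise ValueError(f"{d!r} is not one of the 31 points 0.0, 0.1, ..., 3.0")
    integer, decimal_digit = divmod(tenths, 10)
    return integer, decimal_digit


# The full 31-point scale, built from integers to avoid accumulated float error.
SCALE = [t / 10 for t in range(31)]

print(split_d_score(2.7))  # (2, 7)
print(split_d_score(3.0))  # (3, 0)
print(len(SCALE), SCALE[0], SCALE[-1])  # 31 0.0 3.0
```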

3.2 The Four Data Classes (Levels) & Confidence Intervals

To render legal relationships computable, this framework assigns a Distance Score (d) where the Integer indicates the primary classification and the Decimal indicates the Confidence Interval (the strength or fidelity of the match).

Level 1: Total Legal Equivalent (d=0.0)

- Definition: A perfect, one-to-one match where the term can be substituted across jurisdictions without any changes in Morphology/Legal Definition (M), Teleology/Legal Purpose (P), Practical Outcomes (R, Pr, N), underlying doctrines, or theoretical interpretations.

- Criteria: Substitutability must hold true even in “complex and novel situations”.

- Metric: d=0.0 (Exact Match).

Level 2: Functional Legal Equivalent (d=0.1-1.9)

- Definition: A relationship where terms achieve a high degree of overlap in Teleology/Legal Purpose (P) and substantially similar Practical Outcomes (R, Pr, N) in standard applications, even though their Morphology/Legal Definition (M) diverges.

- Confidence Intervals:

- Strong Functional Equivalent (0.1–0.4): High confidence; the outcome is statistically identical (>95% reliability) with negligible procedural friction (Pr).

- Standard Functional Equivalent (0.5–1.4): The “Safe” baseline; the outcome is reliable (>90%) and standard for practitioners.

- Weak Functional Equivalent (1.5–1.9): A technical match; achieves the same Practical Outcome but with marginal reliability (85%–90%) or requires significant procedural friction (e.g., complex workarounds or high costs).

Level 3: Partial Legal Equivalent (d=2.0-2.9)

- Definition: A relationship defined by overlap in Morphology/Legal Definition (M) and Teleology/Legal Purpose (P).

- Criteria: Often represents “False Friends”—terms that share structural features but diverge in Practical Outcomes.

- Confidence Intervals:

- Strong Partial Equivalent (2.0–2.1): High feature overlap; divergence is limited to specific “edge cases,” but the risk of error remains.

- Standard Partial Equivalent (2.2–2.7): Moderate feature overlap; concepts are morphologically similar but consistently diverge in Practical Outcomes in standard applications.

- Weak Partial Equivalent (2.8–2.9): Low feature overlap; concepts are morphologically distinct and share only a broad teleological goal.

Level 4: No Direct Legal Equivalent (d=3.0)

- Definition: A term unique to its jurisdiction with no counterpart sharing core features—specifically failing to satisfy the conjunctive (combined) overlap of Morphology/Legal Definition (M) and Teleology/Legal Purpose (P).

- Metric: d=3.0 (Maximum Distance / Null Value / Orthogonal).

- The Dual-Protocol for Null Values: To reconcile the risk of AI hallucination with the need for quantitative measurement, this framework applies a dual-protocol to this class:

- Generative Protocol (Substitution): When the system is tasked with text generation or legal drafting (Mode B), this class functions as a Null Value (Ø). This acts as a strict “Stop” command, prohibiting the AI from attempting to substitute or translate the term, thereby preventing the fabrication of “Hallucinated Equivalents”.

- Analytical Protocol (Measurement): When the system is tasked with comparative analytics or vector mapping (Mode A), this class is assigned the integer value of 3 (d=3). This allows the algorithm to calculate the magnitude of “Legal Divergence” and track the trajectory of change over time without compromising the integrity of the generative output.
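
A minimal sketch of the dual protocol, assuming a score of exactly 3.0 is the only value it alters: in generative mode (Mode B) the score becomes a null value, represented here by Python's `None`, which calling code must treat as a "Stop"; in analytical mode (Mode A) it stays 3.0 so divergence can still be measured. The `Mode` enum and `apply_protocol` function are hypothetical names.

```python
from enum import Enum
from typing import Optional


class Mode(Enum):
    ANALYTICAL = "A"  # comparative analytics or vector mapping
    GENERATIVE = "B"  # text generation or legal drafting


def apply_protocol(d: float, mode: Mode) -> Optional[float]:
    """Return the value a d-score takes under the given mode.

    Only Level 4 (d = 3.0) is affected: it becomes None (the Null Value,
    a "Stop" for substitution) in generative mode and stays 3.0 in
    analytical mode. All other scores pass through unchanged.
    """
    if round(d * 10) == 30:
        return None if mode is Mode.GENERATIVE else 3.0
    return d


print(apply_protocol(3.0, Mode.ANALYTICAL))  # 3.0
print(apply_protocol(3.0, Mode.GENERATIVE))  # None
print(apply_protocol(2.4, Mode.GENERATIVE))  # 2.4
```

In this sketch a Partial Equivalent such as d = 2.4 is not blocked; the caution the manual attaches to Level 3 would have to be enforced by a separate check.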

Summary of Equivalence Thresholds and Variable Mapping

| Equivalence Level | d-Score Range | Variable Mapping |
|---|---|---|
| Total Equivalent | d = 0.0 | Identical: Total symmetry across all variables (Morphology/Legal Definition (M), Teleology/Legal Purpose (P), and Practical Outcomes (R, Pr, N)). |
| Functional Equivalent | d = 0.1 – 1.9 | Functional Substitution: Substantial similarity in Teleology/Legal Purpose (P) and Practical Outcomes (R, Pr, N), despite Morphology/Legal Definition (M) divergence. The decimal indicates the degree of operational efficiency (Confidence Interval). |
| Partial Equivalent | d = 2.0 – 2.9 | Structural Overlap: Overlap in Morphology/Legal Definition (M) and Teleology/Legal Purpose (P). The decimal identifies the density of feature overlap or notable divergence in Practical Outcomes (R, Pr, N) (Confidence Interval). |
| No Direct Equivalent | d = 3.0 | Orthogonal: Total failure of conjunctive overlap between Morphology/Legal Definition (M) and Teleology/Legal Purpose (P). |
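
The level and confidence-band boundaries of Section 3.2 can be expressed as a lookup table. The sketch below is a hypothetical helper, not the engine's API; it works on the tenths grid (integers 0–30) so that boundary values such as 1.9 and 2.0 are compared exactly.

```python
# (highest tenths value in band, level, band label), in ascending order,
# transcribed from the confidence intervals in Section 3.2.
BANDS = [
    (0, 1, "Total Legal Equivalent"),
    (4, 2, "Strong Functional Equivalent"),
    (14, 2, "Standard Functional Equivalent"),
    (19, 2, "Weak Functional Equivalent"),
    (21, 3, "Strong Partial Equivalent"),
    (27, 3, "Standard Partial Equivalent"),
    (29, 3, "Weak Partial Equivalent"),
    (30, 4, "No Direct Legal Equivalent"),
]


def classify(d: float) -> tuple[int, str]:
    """Map a d-score to its (level, confidence band) on the 31-point scale."""
    tenths = round(d * 10)
    if abs(d * 10 - tenths) > 1e-6 or not 0 <= tenths <= 30:
        raise ValueError(f"{d!r} is not a valid d-score")
    for upper, level, label in BANDS:
        if tenths <= upper:
            return level, label
    raise AssertionError("unreachable: BANDS covers 0-30")


print(classify(0.3))  # (2, 'Strong Functional Equivalent')
print(classify(2.0))  # (3, 'Strong Partial Equivalent')
```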

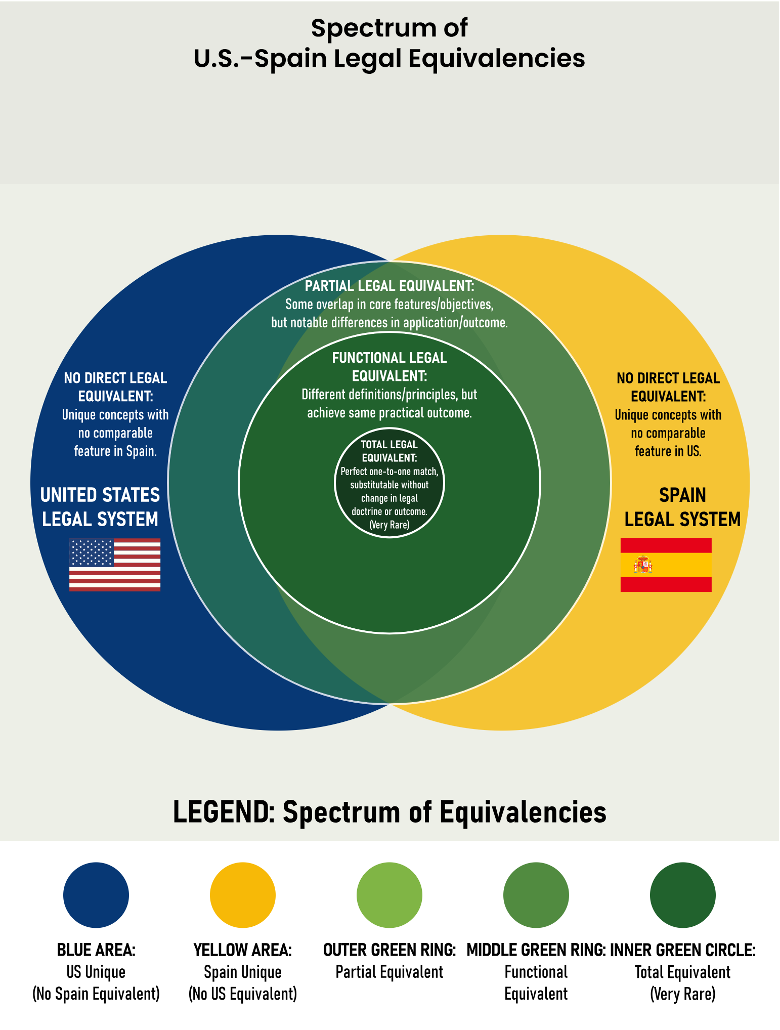

Figure 1: The Legal Equivalence Spectrum

This spectrum diagram visualizes the Legal Equivalence Spectrum as a multi-layered coordinate plane. It illustrates the divergence between concepts that share structural foundations—defined by Morphology/Legal Definition (M) and Teleology/Legal Purpose (P)—versus those that achieve substantially similar Practical Outcomes (R, Pr, N). While the jurisdictions of the United States and Spain are utilized here for illustrative purposes, the Computational Equivalence Methodology and coordinate mapping are universally applicable across any jurisdiction or legal system.

- The Distance Metric (d): The geometric distance from the center corresponds to the computational Legal Distance assigned to the data class.

- Centripetal Convergence: Movement toward the center represents a decrease in distance and an increase in substitutability, with the Inner Green Circle representing the “Zero Distance” zone of a perfect match (d = 0.0).

- Centrifugal Divergence: Movement toward the Outer Blue and Yellow Zones represents maximum distance (d = 3.0), identifying unique jurisdictional concepts where no comparable features exist.

3.3 The Unified Coordinate System

Definition: The Unified Coordinate System is a mathematical framework that applies a single, invariant metric (d) to measure legal distance across a 2D plane, mapping legal relativity over space (jurisdictional variation) and time (historical evolution). This allows disparate legal regimes and historical precedents to be precisely calibrated against one another on a single, computable scale.

- The Temporal Axis (X): Represents the movement of a legal concept through history, typically measured in years.

- The Distance Axis (Y): Represents the degree of equivalence at any given point in time, quantified by the Legal Distance (d) metric.

- Principle of Legal Relativity: This system posits that the identity of a legal term, rule, institution, or concept is defined by its mathematical position (t, d) relative to other points in the coordinate system.

- The Convergence Vector (Vlegal): Rather than an axis, the vector represents the slope or trajectory between two points (t1, d1) and (t2, d2), quantifying the direction and magnitude of legal evolution.
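
Points in the Unified Coordinate System and the vector between them can be sketched as follows. The sketch uses the formula given in the version history, Vlegal = d(t1) – d(t2), so a positive value means the distance shrank (convergence) and a negative value means it grew (divergence). Dividing by elapsed years to obtain a per-year rate is an added assumption, not part of the manual's definition; the `LegalPoint` class, the function names, and the scores in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LegalPoint:
    """A position (t, d) in the Unified Coordinate System."""

    t: float  # Temporal Axis (X): year of observation
    d: float  # Distance Axis (Y): Legal Distance, 0.0-3.0


def v_legal(p1: LegalPoint, p2: LegalPoint) -> float:
    """Vlegal = d(t1) - d(t2); > 0 means convergence, < 0 means divergence."""
    if p2.t <= p1.t:
        raise ValueError("p2 must be observed after p1")
    # d-scores sit on a 0.1 grid, so round away floating-point noise.
    return round(p1.d - p2.d, 1)


def v_legal_per_year(p1: LegalPoint, p2: LegalPoint) -> float:
    # Assumption: normalise the vector by elapsed time so that trajectories
    # measured over intervals of different length can be compared.
    return v_legal(p1, p2) / (p2.t - p1.t)


pre_change = LegalPoint(t=2010, d=2.4)   # hypothetical Pre-Change (t1) score
post_change = LegalPoint(t=2020, d=1.6)  # hypothetical Post-Change (t2) score
print(v_legal(pre_change, post_change))           # 0.8 (convergence)
print(v_legal_per_year(pre_change, post_change))  # 0.08
```

The same two-point calculation fits the Pre-Change (t1) and Post-Change (t2) monitoring described in Section 2.0.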

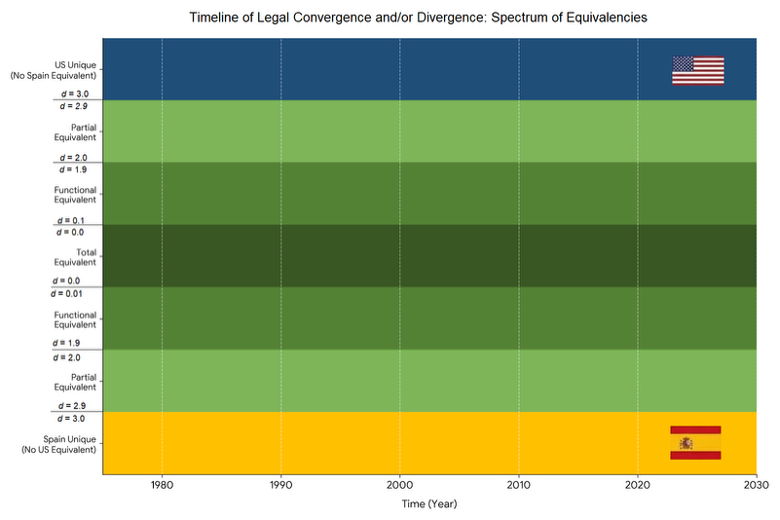

Figure 2: The Unified Coordinate System: Space-Time Dynamics of Legal Convergence

This graph visualizes the Unified Coordinate System, a mathematical framework that maps the precise relationship between disparate legal regimes across a 2D plane. While this illustrative example uses the United States and Spain to represent the outer bounds of divergence, the system is designed to track relationships between any comparable jurisdictions. The horizontal X-axis represents the temporal dimension, tracking the historical movement of a legal concept over time. The vertical Y-axis represents the distance dimension, quantifying the degree of equivalence at any given point in time using the Legal Distance metric (d).

The Y-axis reflects the continuous 31-point Equivalence Spectrum, anchored by a Total Legal Equivalent at the center (d = 0.0) and expanding outward to No Direct Legal Equivalent at the outer edges (d = 3.0). By plotting legal data points on this timeline, researchers can visually and empirically map the Space-Time Dynamics of legal change:

- Convergence: Movement inward toward the center (Green) bands indicates that the legal systems have moved closer in function, purpose, or application.

- Divergence: Movement outward toward the outer “Unique” (Blue/Yellow) bands signifies that the systems have moved further apart, decreasing overlap in purpose or function.

- The Convergence Vector (Vlegal): The slope or trajectory drawn between any two points on this graph represents the Vlegal vector, which quantifies the exact direction and magnitude of legal evolution.

3.4 Operational Impact

For practitioners and scholars, these decimal scores function as a “traffic light” system for cross-jurisdictional risk and analytical precision. The following table provides the operational impact and practical meaning for counsel based on each classification:

Operational Impact: Distance Index (d) Risk Assessment

| Risk Level Marker | Distance Index Range (d) | Functional Level | Assessment Notes |
|---|---|---|---|
| Dark Green Circle | Distance 0.0 | Total Equivalent | EXACT MATCH. Total symmetry across all variables (M, P, R, Pr, N); directly substitutable. |
| Light Green Circle | Distance 0.1 – 1.9 | Functional Equivalent | SAFE. Different Morphology (M), but achieves the same Teleology (P) and Practical Outcome (R, Pr, N). |
| Yellow Circle | Distance 2.0 – 2.9 | Partial Equivalent | CAUTION. A False Friend. Shares Morphology (M) and Teleology (P), but produces different Practical Outcomes (R, Pr, N). |
| Red Circle | Distance 3.0 | No Direct Equivalent | STOP. Failure of conjunctive overlap between Morphology (M) and Teleology (P); substitution results in legal error. |

Figure 3: Operational Impact: The "Traffic Light" System for Counsel and Scholars

This table outlines the practical implications of the Legal Distance metric (d) classifications. It translates the numerical index into actionable guidance, ranging from a green indicator for an exact match (d = 0.0) or safe functional equivalent (d = 0.1 – 1.9), through a yellow “CAUTION” for partial equivalents (d = 2.0 – 2.9), to a red “STOP” warning (d = 3.0) indicating that attempting to substitute the concept will result in legal error.
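
The traffic-light mapping reduces to three thresholds on the d-score. The following is a minimal sketch with hypothetical names; the glossary terms and scores in the usage lines are placeholders, not calibrated data.

```python
def traffic_light(d: float) -> tuple[str, str]:
    """Return (risk marker, assessment) for a d-score, following the risk table."""
    tenths = round(d * 10)
    if abs(d * 10 - tenths) > 1e-6 or not 0 <= tenths <= 30:
        raise ValueError(f"{d!r} is not a valid d-score")
    if tenths == 0:
        return "Dark Green", "EXACT MATCH"
    if tenths <= 19:
        return "Light Green", "SAFE"
    if tenths <= 29:
        return "Yellow", "CAUTION"
    return "Red", "STOP"


# Placeholder glossary: term pairs mapped to already-calibrated d-scores.
glossary = {"term_a": 0.0, "term_b": 1.2, "term_c": 2.5, "term_d": 3.0}
flagged = [term for term, d in glossary.items()
           if traffic_light(d)[1] in {"CAUTION", "STOP"}]
print(flagged)  # ['term_c', 'term_d']
```

Flagging Yellow and Red entries is one way to route them for closer attention; under the HITL protocol every score is still verified by a qualified human expert.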

4.0 Algorithmic Filter

To classify concepts on the 31-point scale, this framework uses a conditional decision tree, the “Algorithmic Filter.” This filter represents the “B” (Computational) component of the A+B=C methodology, providing the scale and precision required for large-scale digital analysis. It structures the classification process by testing the relationship between form (Morphology/Legal Definition (M)), purpose (Teleology/Legal Purpose (P)), and Practical Outcome (R, Pr, N) across three distinct steps. This section defines the Computational Equivalence Query (CEQ), the mandatory structured input, before detailing the Three-Step Decision Tree used to generate the final Legal Distance score (d).

4.1 The Computational Equivalence Query (CEQ)

The purpose of the Computational Equivalence Methodology is to determine the level of equivalence (Legal Distance (d)) between comparable legal terms, rules, institutions or concepts. To execute this, the legal comparatist must first translate the research question into a structured, computable format known as a Computational Equivalence Query (CEQ).

The CEQ serves as the standardized prompt that initiates the computational equivalence analysis. It acts as the required input for the Algorithmic Filter to map a concept’s precise position on the 31-point Legal Equivalence Spectrum, quantifying the distance from a Total Legal Equivalent (d = 0.0) to No Direct Legal Equivalent (d = 3.0).

A complete CEQ requires three sets of data variables to process the comparison:

- Jurisdictional Variables (Systemic Parameters): The specific Source (S) and Target (T) jurisdictions being compared (e.g., United States vs. Spain).

- Legal Variables (The Step 1 Inputs): The specific legal term, rule, institution, or concept being analyzed. To pass the initial Core Feature Filter, these must be deconstructed into:

- Morphology/Legal Definition (M): The constituent statutory or doctrinal elements of the concept.

- Teleology/Legal Purpose (P): The primary regulatory objective or legal purpose of the concept.

- Application Variables (The Step 2 & 3 Inputs): The contextual data required to test Practical Outcomes and determine Functional Equivalence:

- Standard Application Fact Pattern (F): A neutral set of factual circumstances used as a constant variable to test the legal concepts.

- Reliability Rate (R): The statistical or professional consensus rate at which the two systems produce the same Practical Outcome when applied to the fact pattern.

- Procedural Friction (Pr): The level of institutional or procedural overhead required to achieve the outcome (e.g., Low, Standard, or High).

- Iteration Threshold (N-Value): The quantitative number of procedural cycles required to achieve the targeted regulatory objective. Because this metric measures operational efficiency, the unit of “N” adapts contextually to the specific legal mechanism being tested (e.g., N=1 ruling for immediate precedent vs. N≥2 rulings for reiterated doctrine; or N=1 collective action vs. N=Thousands of individual lawsuits to achieve mass redress).

Note: When analyzing purely static substantive rules (e.g., tax rates, age of majority, or speed limits), this variable defaults to a baseline of N=1 to reflect immediate statutory application.

Together, these variables establish the initial baseline required to map the structural and functional relationship of the concepts within the Spectrum of Legal Equivalences (Figure 1) and the Unified Coordinate System (Figure 2).
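As a sketch of how the three variable clusters might be captured as structured input, the following Python dataclass groups them into a single record. The class name `CEQ`, its field names, and the choice to express R as a fraction between 0 and 1 are illustrative assumptions, not part of the methodology's specification.

```python
from dataclasses import dataclass

FRICTION_LEVELS = ("Low", "Standard", "High")


@dataclass(frozen=True)
class CEQ:
    """Hypothetical record for one Computational Equivalence Query."""

    # Jurisdictional Variables (J)
    source: str            # S, e.g. "United States"
    target: str            # T, e.g. "Spain"
    # Legal Variables (L): Step 1 inputs
    morphology: str        # M: constituent statutory or doctrinal elements
    teleology: str         # P: primary regulatory objective
    # Application Variables (A): Step 2 and Step 3 inputs
    fact_pattern: str      # F: neutral fact pattern held constant
    reliability: float     # R: assumed here to be a fraction in [0, 1]
    friction: str          # Pr: "Low", "Standard", or "High"
    iterations: int = 1    # N: baseline of 1 for purely static rules

    def __post_init__(self) -> None:
        # Shape checks only; doctrinal soundness stays with the HITL.
        if not 0.0 <= self.reliability <= 1.0:
            raise ValueError("R must be a fraction between 0 and 1")
        if self.friction not in FRICTION_LEVELS:
            raise ValueError(f"Pr must be one of {FRICTION_LEVELS}")
        if self.iterations < 1:
            raise ValueError("N must be at least 1")


# Illustrative input only; the values are placeholders, not findings.
query = CEQ(
    source="United States",
    target="Spain",
    morphology="Writing Requirement under the Statute of Frauds",
    teleology="Prevention of Fraud",
    fact_pattern="an oral agreement to sell land",
    reliability=0.92,
    friction="Standard",
)
```

The checks in `__post_init__` only guard the shape of the input; whether M, P, and F are doctrinally sound remains a question for the Human-in-the-Loop.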

4.1.1 Mathematical Definition of the CEQ

To satisfy the requirements for empirical calibration and algorithmic benchmarking, the CEQ is expressed as a multi-variable input function where the Legal Distance (d) is the deterministic output of the Algorithmic Filter (𝒜).

$$ d = \mathcal{A}\left( J_{\{S,T\}},\; L_{\{M,P\}},\; A_{\{F,R,Pr,N\}} \right) $$

The Input Variables: The function ingests three distinct data clusters required to map a concept’s position on the 31-point spectrum:

- Jurisdictional Variables (J): The systemic parameters defining the Source (S) and Target (T) jurisdictions.

- Legal Variables (L): The structural inputs consisting of Morphology/Legal Definition (M) (statutory/doctrinal elements) and Teleology/Legal Purpose (P) (primary regulatory objective/purpose).

- Application Variables (A): The contextual data used to test functional outcomes:

- F: Standard Application Fact Pattern.

- R: Reliability Rate (minimum 85% threshold).

- Pr: Procedural Friction (Low, Standard, High).

- N: Iteration Threshold (Operational efficiency cycles).

Methodological Impact: By framing the query as a mathematical function, the methodology ensures falsifiability. Any challenge to a resulting d-score must identify a specific error in one or more input variables (J, L, A), transitioning legal discourse from subjective debate over terminology to objective data refinement. This structure provides the necessary “Logic Blueprint” for the Computational Equivalence Engine (v1.0) and satisfies the human oversight requirements of Article 14 of the EU AI Act.

Integration with the Hybrid Methodology (A + B = C): The CEQ mathematical function serves as the technical engine for the Classical-Computational Hybrid Methodology introduced in Section 1.0.

- (A) The Classical Foundation: The function’s input variables (J, L, A) represent the “Classical” foundation, requiring the qualitative nuance and doctrinal expertise of the human scholar to define the morphology, teleology, and procedural friction.

- (B) The Computational Scale: The Algorithmic Filter (𝒜) provides the “Computational” scale, processing the variables through a standardized, falsifiable logic tree.

- (C) The Hybrid Outcome: The resulting Legal Distance metric (d) is the optimal “Hybrid Outcome”—a highly precise, computable data point that preserves the essential ‘spirit of the law’ for large-scale digital analysis.

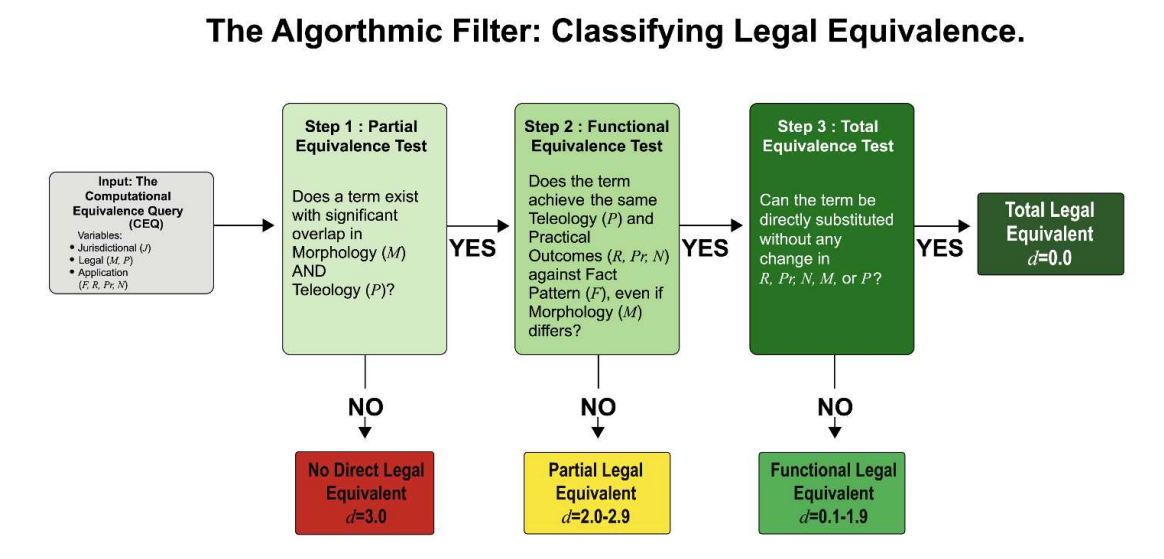

Figure 4: Algorithmic Filter: Classifying Legal Equivalence

This flowchart illustrates the three-step conditional decision tree used to process the Computational Equivalence Query (CEQ) and classify legal concepts on the 31-point scale (d = 0.0 – 3.0). By systematically testing a concept’s structural foundations, Morphology/Legal Definition (M) and Teleology/Legal Purpose (P), against its Practical Outcomes (R, Pr, N), the filter routes the classification process through three empirical gates:

- The Partial Equivalence Test (The Core Feature Filter);

- The Functional Equivalence Test (The Substantially Similar Outcome Filter); and

- The Total Equivalence Test (The Perfect Substitution Filter).

This hierarchical logic ensures that every result is a product of the Computational Equivalence Methodology, providing a rigorous and transparent audit trail for Scholarly Authentication.

4.1.2 Standard Format of the CEQ (The IRAC Issue)

The Mandatory Initiation Step: To satisfy the requirements for Jurisprudential Synthesis and ensure the Audit Trail is doctrinally sound, the practitioner must synthesize the foundational variables (J, L, A) into the Standard Format of the CEQ (The IRAC Issue). This converts the abstract research question into a high-resolution, falsifiable scientific hypothesis.

Standardized IRAC Template: Issue: Whether the Morphology/Legal Definition (M) of [Source (S)] is equivalent to that of [Target (T)] for the Teleology/Legal Purpose (P) of [Purpose], when tested against the Fact Pattern (F): [Facts], and whether a Practical Outcome of [Result] can be achieved with Reliability (R) of [%], an Iteration Threshold (N) of [Value], and Procedural Friction (Pr) of [Low/Standard/High]?

Methodological Impact: Formulating the CEQ in this format ensures the Human-in-the-Loop (HITL) has identified all variables required to navigate the full Algorithmic Filter. It specifically:

- Provides the Morphology/Legal Definition (M) and Teleology/Legal Purpose (P) required to satisfy the Step 1 Conjunctive Gate.

- Defines the Fact Pattern (F) and Reliability (R) necessary to trigger the Step 2 Substantially Similar Outcome Filter.

- Establishes the Iteration Threshold (N), Procedural Friction (Pr), and Practical Outcome required to calibrate the “Real-World Experience” variables and finalize the precise Legal Distance (d) score.
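A minimal sketch of filling the Standardized IRAC Template from its variables follows. The `format_issue` helper and the `ISSUE_TEMPLATE` wording are hypothetical; the helper refuses to render an Issue with an empty placeholder, reflecting the requirement that every variable be identified before the Algorithmic Filter runs.

```python
# Hypothetical rendering of the Standardized IRAC Template.
ISSUE_TEMPLATE = (
    "Issue: Whether the Morphology/Legal Definition (M) of {source} is "
    "equivalent to that of {target} for the Teleology/Legal Purpose (P) of "
    "{purpose}, when tested against the Fact Pattern (F): {facts}, and whether "
    "a Practical Outcome of {result} can be achieved with Reliability (R) of "
    "{reliability:.0%}, an Iteration Threshold (N) of {n}, and Procedural "
    "Friction (Pr) of {friction}?"
)

REQUIRED_FIELDS = (
    "source", "target", "purpose", "facts",
    "result", "reliability", "n", "friction",
)


def format_issue(**fields: object) -> str:
    """Fill the IRAC Issue; refuse if any placeholder is missing or empty."""
    missing = [name for name in REQUIRED_FIELDS
               if fields.get(name) in (None, "")]
    if missing:
        raise ValueError(f"CEQ incomplete, missing: {', '.join(missing)}")
    # reliability is expected as a fraction (0.92 renders as "92%").
    return ISSUE_TEMPLATE.format(**fields)


issue = format_issue(
    source="the United States", target="Spain",
    purpose="Prevention of Fraud", facts="an oral agreement to sell land",
    result="non-enforcement of the agreement", reliability=0.92,
    n=1, friction="Standard",
)
```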

4.1.3 The HITL Validation Gate (Pre-Audit)

Before finalizing the CEQ, the practitioner must verify that the Issue statement is grounded in objective evidence. An Issue that cannot meet these verification standards is disqualified from the Algorithmic Filter:

- [ ] Morphology/Legal Definition (M): Can you point to a specific statute or case (the Doctrinal Anchor)?

- [ ] Teleology/Legal Purpose (P): Is the “why” of the law documented (the Teleological Intent), or are you guessing?

- [ ] Empirical Support (R): Do you have Path A data or Path B consensus to back up your probability?

- [ ] Real-World Drag (Pr): Has a local counsel or practitioner verified the actual difficulty/cost?

- [ ] Procedural Cycle (N): Is the number of iterations (N) based on actual court timelines?
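The Validation Gate can be expressed as a pre-check that reports which items still lack evidence. The dictionary keys and the `validation_gate` and `may_enter_filter` functions are illustrative assumptions; ticking a box in code still presupposes that the practitioner actually holds the underlying evidence.

```python
# Hypothetical encoding of the HITL Validation Gate checklist.
CHECKLIST = {
    "M": "Doctrinal Anchor: a specific statute or case is cited",
    "P": "Teleological Intent is documented, not guessed",
    "R": "Path A data or Path B consensus supports the probability",
    "Pr": "Local counsel or a practitioner verified the actual difficulty/cost",
    "N": "The iteration count is based on actual court timelines",
}


def validation_gate(evidence: dict) -> list:
    """Return unmet checklist items; an empty list means the Issue may proceed."""
    return [item for key, item in CHECKLIST.items() if not evidence.get(key, False)]


def may_enter_filter(evidence: dict) -> bool:
    # Any single unmet item disqualifies the Issue from the Algorithmic Filter.
    return not validation_gate(evidence)
```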

4.2 The Three-Step Decision Tree

Input: Computational Equivalence Query

Step 1: The Partial Equivalence Test (The Core Feature Filter). Does a legal term exist in the target jurisdiction that shares (1) significant overlap in constituent statutory or doctrinal elements (Morphology / Legal Definition (M)), AND (2) a shared regulatory objective (Teleology / Legal Purpose (P))?

- NO: Classification is No Direct Legal Equivalent (d=3.0).

- YES (Baseline Partial): Proceed to Step 2.

Step 2: The Functional Equivalence Test (The Substantially Similar Outcome Filter). When tested against a Standard Application Fact Pattern (F) (a neutral set of circumstances isolating Step 1 features), does this term achieve a high degree of overlap in Teleology/Legal Purpose (P) and substantially similar Practical Outcomes (R, Pr, N) in both jurisdictions, even if their Morphology/Legal Definition (M) differs?

- NO: Classification remains Partial Legal Equivalent (d=2.0–2.9).

- Next Step: Proceed to Section 6.1 (Protocol A) to calculate the Confidence Interval (Decimal Score).

- YES (Promote to Functional): Proceed to Step 3.


Step 3: The Total Equivalence Test (The Perfect Substitution Filter). Can the term be “directly substituted” across jurisdictions without any change in Practical Outcome (R, Pr, N), Morphology/Legal Definition (M), Teleology/Legal Purpose (P), underlying doctrine, or theoretical interpretation, even in complex and novel situations?

- NO: Classification is Functional Legal Equivalent (d=0.1–1.9).

- Next Step: Proceed to Section 6.2 (Protocol B) to calculate the calibrated Confidence Interval (Decimal Score).

- YES: Classification is Total Legal Equivalent (d=0.0).

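The three-step decision tree reduces to a chain of early returns. In the sketch below, the four boolean inputs are hypothetical stand-ins for the practitioner's answers at each gate, and the returned ranges are the class-level bands given above, which Protocol A or Protocol B then narrows to a single decimal score.

```python
def classify(core_overlap: bool, shared_purpose: bool,
             similar_outcome: bool, perfect_substitution: bool):
    """Return (classification, (min d, max d)) for the three-step tree.

    Later answers are ignored once an earlier gate fails.
    """
    # Step 1: conjunctive gate, M overlap AND shared P are both required.
    if not (core_overlap and shared_purpose):
        return "No Direct Legal Equivalent", (3.0, 3.0)
    # Step 2: substantially similar Practical Outcomes under fact pattern F.
    if not similar_outcome:
        return "Partial Legal Equivalent", (2.0, 2.9)      # refine via Protocol A
    # Step 3: direct substitution with no change of any kind.
    if not perfect_substitution:
        return "Functional Legal Equivalent", (0.1, 1.9)   # refine via Protocol B
    return "Total Legal Equivalent", (0.0, 0.0)
```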

5.0 The Vlegal Equation: Measuring Magnitude and Direction in the Unified Coordinate System

5.1 The Fundamental Equation

Objective: To measure the magnitude and direction of legal movement over a specified interval—either chronologically (Time) or across jurisdictions (Space). While the d-score provides a static degree of separation, the Vlegal equation defines the Legal Vector representing the shift.

The Equation:

$$ V_{\text{legal}} = \frac{\Delta d}{\Delta t} $$

Expanded Form:

$$ V_{\text{legal}} = \frac{d(t_2) - d(t_1)}{t_2 - t_1} $$

Where:

- $d(t_2)$: The degree of separation at the end of the interval (Time 2).

- $d(t_1)$: The degree of separation at the start of the interval (Time 1).

- $t_1$: The initial point in time or the baseline jurisdiction (The “Initial State”).

- $t_2$: The final point in time or the secondary jurisdiction (The “Final State”).

Standardized Components:

- Magnitude of Change (|V|): The absolute value of the Legal Vector, i.e., the numerical shift in the d-score per unit of the interval Δt. This quantifies “how much” the law has moved, regardless of whether it is becoming more similar or more distinct.

- Direction of Change: The orientation of the Legal Vector relative to the Source (S):

- Convergence (-): A decrease in the degree of separation (moving toward d=0.0).

- Divergence (+): An increase in the degree of separation (moving toward d=3.0).

- Interval Δt: The temporal or jurisdictional span over which the magnitude of the Legal Vector is measured.

5.2 Interpretation Key

The resulting Legal Vector (Vlegal) identifies the trajectory of legal change. For practitioners, the mathematical sign indicates the direction, while the number indicates the intensity.

- Positive Vector (+V) | Divergence: The result is positive, meaning the degree of separation has increased (the systems have moved further apart toward d=3.0). A higher positive number indicates more radical systemic rupture.

- Negative Vector (-V) | Convergence: The result is negative, meaning the degree of separation has decreased (the systems have moved closer toward d=0.0). A larger negative magnitude indicates more rapid harmonization.

- Zero Vector (0) | Stability or Feature Shift: A result of 0 indicates that the overall magnitude of distance on the spectrum has not changed.

- Note: If Vlegal = 0, the researcher must apply the Mixed Dynamics Test (Section 7.2) to determine if an internal “Feature Shift” has occurred where the distance remains constant but the underlying nature of the equivalence has altered.
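The sketch below computes the Legal Vector from two d-scores and labels its direction according to the Interpretation Key. The function names are hypothetical, and treating a spatial comparison as an interval of Δt = 1 is an assumption (consistent with the V = +2.0 example in Section 5.3). Because the d-scale is ordinal, the result should be read as a directional heuristic rather than a physical rate.

```python
def legal_vector(d_start: float, d_end: float, t_start: float, t_end: float) -> float:
    """V_legal = (d(t2) - d(t1)) / (t2 - t1)."""
    if t_end == t_start:
        raise ValueError("the interval Δt must be non-zero")
    return (d_end - d_start) / (t_end - t_start)


def direction(v: float, tol: float = 1e-9) -> str:
    # Sign only: the d-scale is ordinal, so V is a directional heuristic.
    if v > tol:
        return "Divergence (+)"
    if v < -tol:
        return "Convergence (-)"
    return "Stability or Feature Shift (apply the Mixed Dynamics Test)"


# Temporal: d falls from 2.4 (year 2000) to 1.2 (year 2020).
v_time = legal_vector(2.4, 1.2, 2000, 2020)   # about -0.06 per year
# Spatial, assuming Δt = 1: Functional (d=1.0) to non-equivalent (d=3.0).
v_space = legal_vector(1.0, 3.0, 0, 1)        # +2.0
```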

5.3 Technical Constraints: Ordinality and Heuristics

While the Vlegal equation enables the aggregation of empirical data, it must be interpreted through the following mathematical constraints to ensure doctrinal integrity:

- Ordinal Data: The assigned numerical values (0-3) represent ordinal data (ranked categories) rather than interval data (fixed physical distances). A “distance” of 2.0 (Partial Equivalence) should not be interpreted as mathematically “double” the divergence of a “distance” of 1.0 (Functional Equivalence).

- Directional Heuristic: Consequently, the Vlegal calculation is a directional heuristic. It indicates the rank-order magnitude of change, functioning as a relative index for comparative analysis rather than an absolute metric of semantic distance.

- The Analytical d=3.0 Baseline: Under the Analytical Protocol, a value of d=3.0 is utilized as the maximum boundary of the Legal Distance scale. This allows the algorithm to track “High Magnitude Divergence” (e.g., V = +2.0) when a system moves from Functional Equivalence (d=1.0) to a unique, non-equivalent state (d=3.0).

6.0 Border Cases & Empirical Resolution Protocols

“Border cases” refer to instances of ambiguity encountered when classifying legal concepts within the Legal Equivalence Spectrum. These protocols are used not only to distinguish between primary classes (e.g., Partial vs. Functional) but also to calculate the precise Confidence Interval (the decimal score) within a specific class. When the Algorithmic Filter encounters such ambiguity, two specific empirical protocols are employed to calculate the final Legal Distance Score (d).

6.1 Protocol A: Core Feature Filter (Resolving No Direct Equivalents & Partial Equivalents)

This protocol is used to resolve ambiguity at the boundary between No Direct Equivalents (d=3.0) and Partial Equivalents. It also serves as the mandatory calibration tool for calculating the Confidence Interval (d=2.0–2.9) for all concepts within the Partial Equivalence spectrum, including those that revert to this class after failing the Functional Equivalence Test in Step 2. By measuring the density of overlap across two distinct variables, Morphology/Legal Definition (M) and Teleology/Legal Purpose (P), this protocol ensures the final distance score is grounded in verifiable structural and purposeful alignment rather than a ‘hallucinated’ match.

Mapping Targets

To satisfy the Step 1 conjunctive gate and move beyond a d=3.0 classification, the following mandatory variables must be deconstructed:

- Morphology / Legal Definition (M): The constituent statutory requirements or doctrinal components (e.g., “120 km/h speed limit” or “Writing Requirement under the Statute of Frauds”).

- Teleology / Legal Purpose (P): The explicit regulatory objective or social problem the concept is designed to remedy (e.g., “Public Safety” or “Prevention of Fraud”). Mapping this variable is required to identify “False Friends.”

Scoring Logic Rules

The final decimal score is determined by the cumulative density of overlap between the identified targets:

- No Direct Equivalent (d=3.0): Fails the Conjunctive Requirement. If there is zero overlap in either a core structural element (M) or a core purposeful element (P), the concepts are strictly orthogonal and cannot be promoted to Level 3.

- Weak Partial Equivalent (d=2.8–2.9): Low Feature Overlap (minimum 1 Morphological and 1 Teleological match). This is the minimum baseline required to prevent a d=3.0 classification.

- Standard Partial Equivalent (d=2.2–2.7): Moderate Feature Overlap. Concepts share significant morphological roots and teleological elements but consistently diverge in Practical Outcomes.

- Strong Partial Equivalent (d=2.0–2.1): High Feature Overlap. Concepts share near-identical morphological structures and teleological alignment, diverging only in specific ‘edge case’ outcomes where the Reliability Rate (R) falls below the 85% threshold.
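The scoring logic above can be sketched as a lookup guarded by the Conjunctive Requirement. The overlap density label ("low", "moderate", "high") is assumed to be assigned by the human scholar, since the protocol does not fix match counts for the moderate and high bands; `protocol_a_range` and `PARTIAL_BANDS` are hypothetical names.

```python
# Hypothetical mapping from scholar-assigned overlap density to d-bands.
PARTIAL_BANDS = {
    "low": (2.8, 2.9),       # Weak Partial Equivalent
    "moderate": (2.2, 2.7),  # Standard Partial Equivalent
    "high": (2.0, 2.1),      # Strong Partial Equivalent
}


def protocol_a_range(m_matches: int, p_matches: int, overlap: str):
    """Map feature overlap to a Partial Equivalence band (or d = 3.0)."""
    # Conjunctive Requirement: at least one M match AND one P match.
    if m_matches < 1 or p_matches < 1:
        return (3.0, 3.0)
    if overlap not in PARTIAL_BANDS:
        raise ValueError(f"overlap must be one of {sorted(PARTIAL_BANDS)}")
    return PARTIAL_BANDS[overlap]
```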

6.2 Protocol B: Statistical Outcome Analysis (Resolving Partial vs. Functional Equivalence & Confidence Intervals)

This protocol is used to resolve border cases between Partial and Functional Equivalents and to calculate the Confidence Interval for all Functional Equivalents (d=0.1–1.9) by quantifying the reliability of the outcome. It transitions the analysis from structural comparison to operational verification.

To determine the functional score, the researcher must first establish a Standard Application Fact Pattern (F) as a constant variable. The outcome is then verified by identifying which of the following three “Data States” the target jurisdiction currently occupies, which dictates the required path:

- State 1: Statistically Sufficient Judicial Branch Data (Path A – Quantitative/Frequentist): A sufficient volume of relevant court cases exists to statistically calculate the frequency of identical Practical Outcomes. No expert elicitation is required.

- State 2: Statistically Insufficient Judicial Branch Data / Small Sample (Path B – Bayesian Priors: Judicial Source): Relevant court cases exist, but the aggregate volume is statistically insufficient for Path A. This includes, but is not limited to: isolated trial court judgments, scattered first-instance rulings establishing judicial trends, a consistent series of regional appellate orders, binding or persuasive appellate decisions (e.g., U.S. Circuit Courts), interlocutory or emergency orders from high courts (e.g., “shadow docket” entries), or a limited sequence of high-court precedents. The Authenticator uses Expert Elicitation to establish a verified Bayesian Prior and may supplement these judicial signposts with Governmental Action & Inaction (State 3 data) so that the Prior reflects the operational reality of the law.

- State 3: Zero or Non-Representative Judicial Branch Data (Path B – Bayesian Priors: Governmental Action & Inaction): This state applies when there is either a total lack of judicial data or when the available data is so sparse that it cannot stand alone. In this judicial data void, the Authenticator is strictly barred from relying on subjective theory. Instead, the expert must establish a verified Bayesian Prior by pivoting to Governmental Action, specifically Executive Branch Action or Legislative Branch Action as outlined in Section 6.3.

- The Functional Scope of Governmental Action: For the purposes of this methodology, “Governmental Action” is defined as any measurable output—formal or informal—by Heads of State, Legislators, Civil Servants, or Government Workers.

- Affirmative Acts: Includes implementation and enforcement, ranging from high-level decrees to “street-level” conduct such as a police officer issuing a citation or a clerk processing voter registration. These acts provide the empirical “ground truth” for the Reliability Rate (R) and Procedural Friction (Pr).

- Inaction and Omissions (Material Silence): The failure or refusal to perform a mandatory duty. This methodology treats such omissions as a measurable “action,” functionally equivalent to a “Failure to Act” under the Administrative Procedure Act (5 U.S.C. § 551(13)), Spain’s silencio administrativo, Germany’s Untätigkeitsklage, or Article 265 TFEU of EU Law. These data points are used to quantify a decrease in Reliability (R) and/or an increase in the Iteration Threshold (N).

The Representative Test (State 2 vs. State 3 Boundary): To qualify for State 2, the available cases must be “representative” of the Standard Application Fact Pattern (F). If the available judicial data is purely anecdotal or addresses tangential elements rather than the core factual issue being tested, the data is deemed “Non-Representative.” In such instances, the system strictly requires the Authenticator to default to State 3 and verify the practical outcome via Extra-Judicial Primary Data (Governmental Action & Inaction) to establish the Bayesian Prior.

Table: Data State Sensor Mapping

| Data State | Primary Data Branch | Target Actors | Operational Logic |
|---|---|---|---|
| State 1: Sufficient | Judicial Branch | Judges, Lawyers, Litigants | Path A: Frequentist Probability |
| State 2: Insufficient | Judicial Branch | Judges, Lawyers, Litigants | Path B: Bayesian Prior (Judicial) [Optional: Governmental Action & Inaction] |
| State 3: Zero or Non-Representative | Executive / Legislative Branches | Heads of State, Legislators, Civil Servants, and Government Workers | Path B: Bayesian Prior (Governmental Action & Inaction) |
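The Data State Sensor Mapping can be sketched as a small routing function. The name `data_state` and its boolean inputs are hypothetical; applying the Representative Test before the sufficiency check (so that non-representative data never reaches Path A) is an assumption drawn from the boundary rule above.

```python
def data_state(case_count: int, sufficient: bool, representative: bool):
    """Return (state, path) following the Data State Sensor Mapping."""
    # No cases, or cases that fail the Representative Test, fall to State 3.
    if case_count == 0 or not representative:
        return 3, "Path B: Bayesian Prior (Governmental Action & Inaction)"
    # Representative and statistically sufficient: frequentist Path A.
    if sufficient:
        return 1, "Path A: Frequentist Probability"
    # Representative but too few cases: judicial Bayesian Prior.
    return 2, "Path B: Bayesian Prior (Judicial)"
```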

Scientific Validity of Path A (The Quantitative Threshold)

To qualify for State 1 (Path A), the dataset must be more than an anecdotal collection. The volume of primary court cases or empirical data is deemed “sufficient” only when the sample size is large enough to be mathematically representative of the jurisdiction’s total litigation volume for that Standard Fact Pattern (F). If the total litigation volume for an issue is too small to reliably calculate a Reliability (R) rate, even if the researcher has collected 100% of the available cases, a Path A calculation of the Legal Distance (d) is invalid. In such cases of a small sample population, the researcher is strictly required to default to State 2 (Path B) and submit the available cases to a Scholarly Authenticator for qualitative Jurisprudential Synthesis.
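The manual does not fix a numerical sufficiency test. One possible operationalization, shown below as an assumption rather than the methodology's rule, computes a Wilson score confidence interval for R and treats the data as sufficient for Path A only if the interval's half-width falls under a chosen tolerance (a hypothetical 5 percentage points here). This captures the point made above: even a complete set of 12 cases leaves R too uncertain to calculate reliably. Function names and the tolerance are illustrative.

```python
import math


def wilson_interval(matches: int, n: int, z: float = 1.96):
    """Wilson score interval for the share of matching outcomes (R)."""
    if n <= 0 or not 0 <= matches <= n:
        raise ValueError("need 0 <= matches <= n and n > 0")
    p = matches / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half


def path_a_sufficient(matches: int, n: int, max_half_width: float = 0.05) -> bool:
    # Hypothetical tolerance: R must be pinned down to within ±5 points.
    low, high = wilson_interval(matches, n)
    return (high - low) / 2 <= max_half_width
```

With these assumptions, `path_a_sufficient(11, 12)` returns `False` (half-width of roughly 0.17), while `path_a_sufficient(920, 1000)` returns `True` (roughly 0.017).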

Scientific Validity of Path B

Professional consensus, derived through formal expert elicitation, functions as a falsifiable Bayesian Prior (P0). If future case law reveals a statistically significant rate of Divergent Outcomes (negatively impacting the Reliability (R)), the score is objectively falsified, and the d-score must be recalibrated. This establishes the d-score as a scientific hypothesis subject to revision as data increases.
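One common way to make an elicited prior falsifiable is a Beta-Binomial model, sketched below as an assumption rather than the manual's prescribed statistic. The expert consensus value for R and a "strength" (how many cases the consensus is treated as being worth) define a Beta prior, and each later case updates it. The recalibration check compares the posterior mean with the 85% functional floor, which simplifies the "statistically significant" test described above. All function names and parameters are hypothetical.

```python
def beta_prior(consensus_r: float, strength: float):
    """Beta(a, b) prior whose mean equals the elicited consensus R."""
    if not 0 < consensus_r < 1 or strength <= 0:
        raise ValueError("need 0 < R < 1 and strength > 0")
    return consensus_r * strength, (1 - consensus_r) * strength


def update(prior, same_outcomes: int, divergent_outcomes: int):
    # Conjugate update: matching outcomes add to a, Divergent Outcomes to b.
    a, b = prior
    return a + same_outcomes, b + divergent_outcomes


def posterior_mean(params) -> float:
    a, b = params
    return a / (a + b)


def needs_recalibration(params, floor: float = 0.85) -> bool:
    # Simplified falsification check against the 85% functional floor.
    return posterior_mean(params) < floor


prior = beta_prior(0.92, strength=20)   # consensus treated as worth ~20 cases
posterior = update(prior, same_outcomes=6, divergent_outcomes=4)
```

Here the posterior mean drops to about 0.81, below the floor, so the d-score would be flagged for recalibration.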

The Operational Metrics

The final score is calculated by processing three distinct data points measuring “real-world” legal efficiency:

- Reliability Rate (R): The statistical or consensus-based percentage (minimum 85%) at which the two systems produce the same Practical Outcome.

- Procedural Friction (Pr): An assessment of the institutional overhead, costs, or barriers required to achieve the outcome (Low, Standard, or High).

- Iteration Threshold (N): The number of procedural cycles (e.g., N=1 for immediate bindingness or N≥2 for reiterated doctrine) required to trigger the outcome.

Friction Calibration: The Role of the Iteration Threshold (N-Value)

The N-Value serves as the primary empirical indicator of Procedural Friction (Pr):

- Low Friction: Mechanisms achieving immediate binding application (N=1) typically exhibit Low Friction.

- Standard or High Friction: Mechanisms requiring a latency period or cumulative reiteration (N≥2) inherently introduce Standard or High Friction due to the required institutional overhead.

Scoring Logic Rules: The “Drag Coefficient” Matrix

Reliability (R) sets the maximum possible tier (the ceiling), while Procedural Friction (Pr) acts as a “drag” that lowers the confidence interval.

1. Ceiling: Reliability > 95%

- Low Friction (Typically N=1): Score as Functional Strong (d = 0.1–0.4). The outcome is effectively identical with negligible procedural barriers.

- Standard Friction (Typically N≥2): Score as Functional Standard (d = 0.5–0.9). The outcome is highly reliable, but latency or procedural hurdles downgrade the practical equivalence.

- High Friction: Score as Functional Weak (d = 1.5–1.9). Severe procedural friction (e.g., parallel litigation, high costs, or excessive latency) erodes the value of the high statistical reliability.

2. Ceiling: Reliability > 90% to 95%

- Low or Standard Friction: Score as Functional Standard (d = 1.0–1.4). The outcome is reliable and standard for practitioners.

- High Friction: Score as Functional Weak (d = 1.5–1.9). Complex procedural workarounds drag the score down to the caution zone.

3. Ceiling: Reliability 85% to 90%

- Any Friction Level: Score as Functional Weak (d = 1.5–1.9). Marginal statistical reliability limits the classification to a weak equivalent, regardless of procedural ease.

4. Reliability < 85%

- Failure: The relationship fails the Functional Equivalence Test. Revert to Partial Equivalent (d = 2.0–2.9) and score using Protocol A.
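The Drag Coefficient Matrix can be sketched as a function in which Reliability selects the tier and Procedural Friction selects the band within it. The sub-ranges used here (0.1–0.4, 0.5–0.9, 1.0–1.4, 1.5–1.9) are assumptions reconstructed from the tier labels; the names `protocol_b_range` and `typical_friction` are hypothetical, and the latter only encodes the N-Value heuristic from the Friction Calibration rules as a default the Authenticator may override.

```python
def typical_friction(n: int) -> str:
    # N=1 typically signals Low friction; N>=2 at least Standard (the
    # Authenticator may escalate to High on the evidence).
    return "Low" if n == 1 else "Standard"


def protocol_b_range(reliability: float, friction: str):
    """Apply the Drag Coefficient Matrix: R sets the ceiling, Pr drags it."""
    if friction not in ("Low", "Standard", "High"):
        raise ValueError("friction must be Low, Standard, or High")
    if reliability < 0.85:
        return "Partial (revert to Protocol A)", (2.0, 2.9)
    if reliability > 0.95:
        return {
            "Low": ("Functional Strong", (0.1, 0.4)),
            "Standard": ("Functional Standard", (0.5, 0.9)),
            "High": ("Functional Weak", (1.5, 1.9)),
        }[friction]
    if reliability > 0.90 and friction != "High":
        return "Functional Standard", (1.0, 1.4)
    # 85-90% at any friction, or >90-95% with High friction.
    return "Functional Weak", (1.5, 1.9)
```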

6.3 Protocol C: Evidentiary Standards for Professional Consensus Verification

To satisfy the Professional Consensus Verification (Path B) and resolve “data voids,” the researcher must perform a formal Scholarly Authentication. This protocol ensures that the values derived through expert elicitation—specifically the estimates for structural alignment (M, P) and operational reliability (R, Pr, N)—are anchored in verifiable evidence and subjected to a rigorous Jurisprudential Audit.

The Jurisprudential Audit (The Three Pillars)

The Authenticator(s) must verify the variables against three mandatory pillars of academic and professional integrity:

- Doctrinal Integrity: A manual verification that the variables derived via expert elicitation, encompassing the core Morphology / Legal Definition (M) and Teleology / Legal Purpose (P) alongside the operational variables (R, Pr, N), are grounded in current statutes, court rules, court cases, and Governmental Action. This ensures the d-score accurately reflects both the written law and the actual procedural requirements enforced by the courts and relevant executive/administrative authorities.

- Jurisprudential Synthesis: A qualitative refinement to account for the “spirit of the law” and nuanced socio-legal factors. The Authenticator(s) must ensure that the Reliability Rate (R) and Procedural Friction (Pr) accurately reflect real-world “drag” and systemic variables that a purely mechanical or algorithmic analysis might overlook. This synthesis bridges the gap between the de jure requirements found in Pillar 1 and the de facto reality of the Living Law.

- Ethical Accountability (The HITL Seal): Pursuant to prevailing professional and academic standards (e.g., ABA Formal Op. 512; EU AI Act, Art. 14), the researcher formally adopts the assigned variables (M, P, R, Pr, N) and the resulting Distance Score (d) as their own Formal Jurisprudential Opinion. By assuming intellectual and professional liability for the doctrinal integrity of the comparison, the researcher satisfies the mandatory Human-in-the-Loop (HITL) oversight required for high-risk legal engineering. This independent verification ensures the output is a formal work product, not an unauthenticated machine result, and acknowledges that cross-jurisdictional comparison requires the competent verification of both domestic and foreign law. Such verification must be performed by a Qualified Professional (e.g., a licensed attorney, or a subject-matter expert with advanced legal training and law degrees in the relevant jurisdictions) to fulfill the duty of competence and avoid the unauthorized practice of law in unadmitted jurisdictions.

Path B Verification Protocol: Data State Verification

If the Authenticator is relying on Expert Elicitation (Path B) due to an absence of statistically sufficient judicial branch data, they must execute this Mandatory Verification Protocol to verify the data state before finalizing the Reliability (R) score.

- The Fail-Safe Rule: To maintain the scientific integrity of the index, a d-score may only graduate to a Functional Equivalence classification (d < 2.0) if it clears the mandatory verification protocol. Failure to clear this protocol results in a Functional Ceiling, restricting the variable to the Partial Equivalence range (d = 2.0–2.9).

Mandatory Verification Protocol

- Condition 1 (Data Path Justification): The researcher must first verify that the jurisdiction lacks the statistically sufficient judicial branch data required for Path A (Frequentist analysis) or that the available judicial data is non-representative of the Standard Fact Pattern (F).

- Condition 2 (Bayesian Anchoring): The professional consensus must be anchored in Doctrinal Signposts and/or Extra-Judicial Primary Data to prove the functional values (R, Pr, N) are grounded in a verified practical outcome rather than subjective theory.

- Condition 3 (Negative Proof Rejection / The “Inertia” Check): For State 3 (Zero Judicial Data or Non-Representative Judicial Data), the researcher is strictly barred from assuming that a lack of court cases proves Reliability (R). They must affirmatively acknowledge that the absence of litigation may be caused by Procedural Friction (Pr), governmental barriers, and/or systemic silence rather than Reliability (R).

- Condition 4 (Extra-Judicial Primary Data Verification): Due to the absence or non-representative nature of judicial data (State 3), the researcher must verify the pivot to the Executive or Legislative branches. This requires citing Extra-Judicial Primary Data, specifically Governmental Performance Metrics (actions by civil servants or government workers) or Evidence of Governmental Inaction (Omission of a Legal Duty), to affirmatively prove the law’s operational reality.
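The Fail-Safe Rule and its four conditions can be sketched as a guard applied after Protocol B. The `PathBEvidence` record and both functions are hypothetical; each boolean stands in for the Authenticator's documented finding on one condition, with Conditions 3 and 4 required only in State 3.

```python
from dataclasses import dataclass


@dataclass
class PathBEvidence:
    """Hypothetical flags recording the four verification conditions."""
    state: int                               # 2 or 3
    path_a_unavailable: bool                 # Condition 1
    bayesian_anchor: bool                    # Condition 2
    inertia_acknowledged: bool = False       # Condition 3 (State 3)
    extra_judicial_data: bool = False        # Condition 4 (State 3)


def clears_protocol(ev: PathBEvidence) -> bool:
    if not (ev.path_a_unavailable and ev.bayesian_anchor):
        return False
    if ev.state == 3:
        return ev.inertia_acknowledged and ev.extra_judicial_data
    return True


def apply_fail_safe(proposed, ev: PathBEvidence):
    """Impose the Functional Ceiling when a Functional band fails verification."""
    _, high = proposed
    if high < 2.0 and not clears_protocol(ev):
        return (2.0, 2.9)
    return proposed
```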

The Constraint against Unfalsifiable Negative Proofs

When relying on Expert Elicitation (Path B) to resolve zero or non-representative judicial branch data (State 3), the Authenticator may not default to a presumption of perfect systemic compliance. Claims that a lack of court cases equates to high reliability must be affirmatively substantiated by Direct Governmental Action Evidence from the Executive or Legislative branches. This includes verified regulatory reporting and Institutional Performance Metrics—such as the statistical frequency of Formal Governmental Acts (Governmental Action), Institutional Interaction Frequencies, or Functional Realization Metrics.

The Rule of Governmental Inaction (Material Omission)

Where a legal mandate requires a proactive institutional response (e.g., emergency service dispatch, environmental inspection, or permit processing), the measured absence of that response (Material Omission) shall be treated as Direct Evidence of low Reliability (R), high Procedural Friction (Pr), and/or an increased Iteration Threshold (N).

Crucially, this definition incorporates the concept of a “Failure to Act” (as defined under the Administrative Procedure Act (APA) in 5 U.S.C. § 551(13), Spain’s silencio administrativo, Germany’s Untätigkeitsklage, or Article 265 TFEU of EU Law), meaning that governmental nonfeasance (the omission or refusal to take a required action by Heads of State, Legislators, Civil Servants, or Government Workers) is itself considered a measurable “action” for the purpose of empirical calibration. High Non-Response Frequencies or low Fulfillment-to-Trigger Ratios constitute empirical data of a Functional Deviation, effectively lowering the Reliability (R) score or increasing the Procedural Friction (Pr) and/or Iteration Threshold (N) despite the absence of judicial litigation.

Empirical Channels for Verification

The Authenticator must ground all Path B consensus in a synthesis of the following Doctrinal Signposts and/or Extra-Judicial Primary Data:

- Statutes, Administrative Regulations, and Court Rules (Primary Doctrinal Signposts / Formal Procedural Anchors): Citations to primary black-letter law—encompassing statutes, administrative regulations, and official court rules— that establish the Morphology (M) and Teleology (P) of the expected outcome. These anchors explicitly define the Iteration Threshold (N) or create structural Procedural Friction (Pr). In Path B, these serve as the baseline for the Bayesian Prior, representing the jurisdiction’s formal commitment to the legal mandate.

- Selected Case Law (Judicial “Doctrinal Signposts”): Citations to specific judicial outputs across any level of the judiciary that serve as the definitive anchors for establishing the Bayesian Prior. This includes, but is not limited to: isolated trial court judgments, scattered first-instance rulings establishing judicial trends, a consistent series of regional appellate orders, binding or persuasive appellate decisions (e.g., U.S. Circuit Courts), interlocutory or emergency orders from high courts (e.g., “shadow docket” entries), or a limited sequence of high-court precedents. These anchors provide the evidentiary basis for expert elicitation regarding Reliability (R), Friction (Pr), and/or Iteration (N).

- Treatises, Restatements, and Legal Scholarship (Scholarly “Doctrinal Signposts”): Citations to standard textbooks, peer-reviewed journals, or expert treatises where the Reliability Rate (R), the core Morphology/Legal Definition (M), or Teleology/Legal Purpose (P) is described as “settled” or “black-letter law”.

- Institutional Standards and Local Legal Culture (Institutional “Doctrinal Signposts”): Reference to official Bar Association standards, the institutional duties of legal practitioners (e.g., “officers of the court” or collaborators with justice), and de facto judicial practices. This includes regional unwritten rules or judge-specific behaviors that define the “Living Law” and determine the real-world Reliability (R) and Procedural Friction (Pr) of a legal outcome, regardless of the written statute.

- Governmental Action & Inaction (Extra-Judicial Primary Data): Citations to non-judicial empirical data that affirmatively demonstrate whether the Practical Outcome (R, Pr, N) is achieved—or fails—through Governmental Action within the Executive and Legislative Branches:

- Governmental Performance Metrics: The statistical frequency of Governmental Action (formal or informal). This includes “street-level” implementation by civil servants and government workers, such as the frequency of police citations/tickets issued, the successful processing of voter registrations, or the approval rates of mandatory permits.

- Governmental Inaction, Failure to Act, and Omission of a Legal Duty: The affirmative verification that a mandatory institutional duty was ignored by a governmental body or official despite the occurrence of a legally triggering event.

- Material Omission as Data: This converts Governmental Silence or nonfeasance (the omission or refusal to take a required action) into a quantitative data point of systemic non-performance by measuring the Response-to-Trigger Ratio. This is functionally equivalent to a “Failure to Act” under the Administrative Procedure Act (5 U.S.C. § 551(13)), Spain’s silencio administrativo, Germany’s Untätigkeitsklage, or Article 265 TFEU in EU law.

- Functional Realization Metrics: High non-response frequencies or low fulfillment-to-trigger ratios (e.g., thousands of filed consumer complaints resulting in zero initiated inspections, or 50 emergency calls resulting in 10 dispatches) provide empirical data of a Functional Deviation, directly lowering the Reliability (R) score and/or increasing the Iteration Threshold (N).
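The Response-to-Trigger Ratio described above reduces to simple arithmetic over two counts. The sketch below is a minimal illustration, assuming that triggering events and required responses are counted over the same observation window; `TriggerRecord`, `response_to_trigger_ratio`, and `non_response_frequency` are hypothetical names, not part of the platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TriggerRecord:
    """Counts for one mandatory duty over an observation window (illustrative)."""
    triggers: int   # legally triggering events, e.g. emergency calls received
    responses: int  # required governmental actions taken, e.g. dispatches


def response_to_trigger_ratio(rec: TriggerRecord) -> float:
    """Share of triggering events that produced the required action (0.0 to 1.0)."""
    if rec.triggers <= 0:
        raise ValueError("the ratio is undefined without at least one triggering event")
    if not 0 <= rec.responses <= rec.triggers:
        raise ValueError("responses must lie between 0 and the number of triggers")
    return rec.responses / rec.triggers


def non_response_frequency(rec: TriggerRecord) -> float:
    """Complement of the ratio: the share of triggers met with governmental silence."""
    return 1.0 - response_to_trigger_ratio(rec)


emergency_calls = TriggerRecord(triggers=50, responses=10)
print(response_to_trigger_ratio(emergency_calls))  # 0.2
print(non_response_frequency(emergency_calls))     # 0.8
```

The consumer-complaint example works the same way: any number of complaints with zero inspections yields a ratio of 0.0. How a given ratio maps onto an adjustment of R or N is left to the Authenticator.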

Logic Key:

- State 2 (Bayesian Priors – Judicial Source): Used when court cases exist but are statistically insufficient for Path A. The Prior is established using Doctrinal Signposts (Judicial, Primary, Scholarly, or Institutional). In this state, the Authenticator may supplement these signposts with Governmental Action & Inaction (State 3 data) to reflect the operational reality of the law.

- State 3 (Bayesian Priors – Governmental Source): Used when zero or non-representative case law creates a judicial data void. The Prior is established by pivoting to Governmental Action & Inaction (Executive Branch Action, Legislative Branch Action, Performance Metrics, or Functional Realization).
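The Logic Key can be read as a routing rule. The sketch below is a minimal illustration, assuming a hypothetical, study-specific case-count threshold (`min_cases_for_path_a`) for statistical sufficiency, since this section does not fix a number; `EvidenceState` and `select_state` are illustrative names.

```python
from enum import Enum


class EvidenceState(Enum):
    PATH_A = "Path A (statistically sufficient case law)"
    STATE_2 = "State 2: Bayesian Priors (Judicial Source)"
    STATE_3 = "State 3: Bayesian Priors (Governmental Source)"


def select_state(case_count: int, representative: bool,
                 min_cases_for_path_a: int) -> EvidenceState:
    """Route a comparison to Path A, State 2, or State 3 following the Logic Key.

    min_cases_for_path_a is a hypothetical sufficiency threshold chosen per study.
    """
    if case_count < 0 or min_cases_for_path_a <= 0:
        raise ValueError("counts must be non-negative and the threshold positive")
    if case_count == 0 or not representative:
        return EvidenceState.STATE_3  # judicial data void: pivot to governmental data
    if case_count < min_cases_for_path_a:
        return EvidenceState.STATE_2  # cases exist but are statistically insufficient
    return EvidenceState.PATH_A


print(select_state(12, True, 30).value)  # State 2: Bayesian Priors (Judicial Source)
```

Treating a non-representative docket as State 3 regardless of its size follows the wording of the State 3 entry; a different sufficiency test can replace the simple count comparison without changing the routing order.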

Path B Validation Gates: Mandatory vs. Optional Requirements

| Condition | State 2: Bayesian Priors (Judicial Source) | State 3: Bayesian Priors (Governmental Source) |
|---|---|---|
| 1. Path A Data Void Acknowledged | Mandatory: Confirm the dataset lacks statistically sufficient court cases. | Mandatory: Confirm the total absence or non-representative nature of a primary judicial dataset. |
| 2. Establishment of the Prior | Mandatory: Established via synthesis of Doctrinal Signposts (Judicial, Primary, Scholarly, or Institutional). Optional: May supplement with Extra-Judicial Primary Data (Governmental Action & Inaction). | Mandatory: Established via synthesis of Extra-Judicial Primary Data (Governmental Action & Inaction). |
| 3. Rejection of Negative Proof | Optional: Best practice to ensure signposts reflect systemic reality. | Mandatory (Lack of Cases ≠ Success): Barred from using silence as affirmative proof. |
| 4. Extra-Judicial Primary Data Verification | Optional: Exempt if Doctrinal Signposts prove operational reality. May supplement with Extra-Judicial Primary Data (Governmental Action & Inaction). | Mandatory: Universal requirement to bridge the data void via Extra-Judicial Primary Data (Governmental Action & Inaction). |

Audit Checklist: Path B Verification

- [ ] Condition 1: Judicial Branch Data Void. I have confirmed that a statistically sufficient volume of primary court cases (Path A) is unavailable.

- [ ] Condition 2: Establishment of the Prior. I am utilizing Path B (Bayesian Priors) to synthesize Doctrinal Signposts and/or relevant Extra-Judicial Primary Data (Governmental Action & Inaction).

- [ ] Condition 3: Rejection of Negative Proof. In State 3, I have not based the Reliability (R) score on an assumption that the law is perfectly obeyed. I acknowledge that a lack of court cases may be caused by Procedural Friction (Pr), administrative barriers, or systemic silence rather than by Reliability (R).

- [ ] Condition 4: Extra-Judicial Primary Data (Governmental Action & Inaction) (State 3 Universal Requirement). I have cited Direct Governmental Evidence from the Executive or Legislative branches to affirmatively prove the Practical Outcome (R, Pr, N). This includes:

- Governmental Performance Metrics: Evidence of formal or informal acts by civil servants or government workers (e.g., police citations, voter registrations, permit processing).

- Functional Realization Metrics: Empirical data verifying the law’s reach into the target population (e.g., tax compliance, census data).

- Evidence of Material Omission: A documented “Failure to Act” (per 5 U.S.C. § 551(13), Article 265 TFEU, or functional equivalents) used as a quantitative data point of systemic non-performance.
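Because the validation-gate table fixes which conditions are mandatory in each state, the checklist can be audited mechanically. This is a minimal sketch, assuming gates are identified by their table numbers (1 to 4) and states by the labels "State 2" and "State 3"; `MANDATORY_GATES` and `missing_mandatory_gates` are hypothetical names.

```python
# Mandatory gates per Path B state, transcribed from the validation-gate table.
MANDATORY_GATES: dict[str, frozenset[int]] = {
    "State 2": frozenset({1, 2}),        # Gates 3 and 4 are optional
    "State 3": frozenset({1, 2, 3, 4}),  # every gate is mandatory
}


def missing_mandatory_gates(state: str, satisfied: set[int]) -> list[int]:
    """Return the mandatory gate numbers the audit has not yet satisfied."""
    try:
        required = MANDATORY_GATES[state]
    except KeyError:
        raise ValueError(f"unknown Path B state: {state!r}") from None
    return sorted(required - satisfied)


print(missing_mandatory_gates("State 2", {1, 2}))     # []
print(missing_mandatory_gates("State 3", {1, 2, 3}))  # [4]
```

An empty list means only that the mandatory boxes are ticked; whether the cited evidence actually supports each condition remains a matter for the Authenticator.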

7.0 Space-Time Dynamics of Legal Convergence: The Unified Coordinate System

Static legal comparison provides a high-fidelity snapshot of a specific moment, but it cannot account for the inherent “latency” or “evolution” of living legal systems. Section 7.0 introduces the Step-by-Step Analysis for Classifying Legal Change, a temporal framework used to plot the movement of legal concepts across the Unified Coordinate System (UCS). By measuring the direction and magnitude of the Legal Convergence Vector (Vlegal) on the Timeline of Legal Convergence, researchers can determine the rate at which a system is moving toward the center or diverging into a decoupled state.

7.1 The Coordinate Space (X and Y Axes)

The Unified Coordinate System maps legal relativity over a 2D coordinate space, where the position of any data point is determined by the audited variables (M, P, R, Pr, N).

- The Temporal Axis (X): Represents the chronological progression of the legal concept, tracking its historical movement over time (e.g., 1980–2030).

- The Distance Axis (Y): Represents the degree of equivalence quantified by the Legal Distance (d) metric. This axis is calibrated by two distinct data layers:

- Level Determinants (Integers 0–3): Establish the equivalence level based on the structural overlap of Morphology (M) and Teleology (P), as filtered by the operational thresholds of R, Pr, and N.

- Confidence Determinants (Decimals .1–.9): Quantify the specific degree of variance within a level (Confidence Interval). This is determined by the density of feature overlap (M, P) or the operational variables of Reliability (R), Procedural Friction (Pr), and the Iteration Threshold (N).
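A single point on the Unified Coordinate System pairs a year on the Temporal Axis with a d-score built from the two Distance Axis layers. The sketch below assumes that d is simply the integer level plus its confidence decimal, and stores the decimal as a count of tenths to avoid floating-point drift. It also admits 0 tenths (the text lists .1–.9) so that boundary values such as d = 0 and d = 3.0 can be represented; `UCSPoint` is a hypothetical name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UCSPoint:
    """One observation on the Unified Coordinate System (illustrative structure)."""
    year: int    # Temporal Axis (X)
    level: int   # Level Determinant: integer equivalence level, 0 to 3
    tenths: int  # Confidence Determinant in tenths (assumption: 0 to 9)

    def __post_init__(self) -> None:
        if self.level not in range(4):
            raise ValueError("level must be an integer from 0 to 3")
        if self.tenths not in range(10):
            raise ValueError("confidence decimal must be 0 to 9 tenths")

    @property
    def d(self) -> float:
        """Distance Axis (Y): the level plus its confidence decimal, e.g. 1 + .4 -> 1.4."""
        return round(self.level + self.tenths / 10, 1)


point = UCSPoint(year=2010, level=1, tenths=4)
print(point.year, point.d)  # 2010 1.4
```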

7.2 Step-by-Step Analysis for Classifying Legal Change

To classify legal evolution, the researcher performs an Initial Assessment to determine the Pre-Change Equivalence (d(t1)) and the Post-Change Equivalence (d(t2)). The final classification is determined by processing the mathematical result of the Legal Convergence Vector (Vlegal) through four sequential logic gates:
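Read as a rate, as the opening of Section 7.0 suggests, the Legal Convergence Vector can be written as below. Dividing by the interval length is an assumption; the gates that follow depend only on the sign of the vector, which is the same whether or not the difference is divided by t2 – t1.

```latex
V_{\text{legal}} = \frac{d(t_2) - d(t_1)}{t_2 - t_1}, \qquad t_2 > t_1,
\qquad
\begin{cases}
V_{\text{legal}} < 0 & \text{Legal Convergence} \\
V_{\text{legal}} > 0 & \text{Legal Divergence} \\
V_{\text{legal}} = 0 & \text{Feature Shift or Stable Equivalence}
\end{cases}
```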

Question 1: Has the change resulted in a clear movement to a higher legal equivalence Level?

- Vector Logic: Is Vlegal < 0? (The distance decreased over the interval t2 – t1).

- Classification: Legal Convergence.

- Variable Drivers: Driven by increased overlap in structural variables (M, P) or an improvement in operational variables (R, Pr, N).

- Visual: Inward movement toward the green center bands (d = 0).

Question 2: Has the change resulted in a clear movement to a lower legal equivalence Level?

- Vector Logic: Is Vlegal > 0? (The distance increased over the interval t2 – t1).

- Classification: Legal Divergence.

- Variable Drivers: Caused by a decrease in structural overlap (M, P) or a degradation in operational efficiency (R, Pr, N).

- Visual: Outward movement toward the “Unique” (Blue/Yellow) bands (d = 3).

Question 3: Did the change increase the overlap in one core equivalence feature while simultaneously decreasing the overlap in another?

- Vector Logic: Is Vlegal = 0, but the change increased operational equivalence (e.g., R, Pr, N) while decreasing structural equivalence (e.g., M, P)?

- Classification: Mixed Legal Convergence and Divergence (Feature Shift).

- Visual: Represented by an oscillating or wavy line style along a horizontal path, signifying a change in the nature of the equivalence without a change in the overall Level.

Question 4: Has the change maintained the same legal equivalence level?

- Vector Logic: Is Vlegal = 0 with no internal feature shift?

- Classification: Stable Equivalence.

- Variable Drivers: Internal fluctuations in variables (M, P, R, Pr, N) that have a negligible impact on the overall comparative relationship.

- Visual: A flat horizontal path within a single equivalence level.
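The four sequential gates can be sketched as a single function. This is an illustration under stated assumptions, not the platform's classifier: Vlegal is taken as the rate (d(t2) − d(t1)) / (t2 − t1), a small tolerance `tol` absorbs floating-point noise, and the structural (M, P) and operational (R, Pr, N) changes are summarized by hypothetical signed integers. Question 3 is checked in both directions (operational gain with structural loss, or the reverse), reading the "e.g." in the text as one example of an opposing shift.

```python
from enum import Enum


class LegalChange(Enum):
    CONVERGENCE = "Legal Convergence"
    DIVERGENCE = "Legal Divergence"
    FEATURE_SHIFT = "Mixed Legal Convergence and Divergence (Feature Shift)"
    STABLE = "Stable Equivalence"


def classify_change(d_t1: float, d_t2: float, t1: float, t2: float,
                    structural_delta: int = 0, operational_delta: int = 0,
                    tol: float = 1e-9) -> LegalChange:
    """Apply the four sequential gates to a pre-change and post-change d-score.

    structural_delta / operational_delta are hypothetical signed summaries of the
    change in overlap for (M, P) and (R, Pr, N): +1 gained, -1 lost, 0 unchanged.
    """
    if t2 <= t1:
        raise ValueError("t2 must be later than t1")
    v_legal = (d_t2 - d_t1) / (t2 - t1)  # assumed rate form; only its sign is used
    if v_legal < -tol:                    # Question 1: distance decreased
        return LegalChange.CONVERGENCE
    if v_legal > tol:                     # Question 2: distance increased
        return LegalChange.DIVERGENCE
    if structural_delta * operational_delta < 0:  # Question 3: opposite feature moves
        return LegalChange.FEATURE_SHIFT
    return LegalChange.STABLE             # Question 4: no internal feature shift


print(classify_change(2.3, 1.4, 2000, 2010).value)  # Legal Convergence
```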

7.3 Strategic Implications of Spatiotemporal Mapping

Tracking these movements through the Unified Coordinate System allows for Predictive Engineering:

- Regulatory Forecasting: Identifying Vlegal trends allows firms to prepare for structural Feature Shifts (changes in M or P) before they are finalized in formal legislation. Persistent Legal Drift (fluctuations in operational variables R, Pr, N) often serves as a leading indicator of systemic realignment.

- Decoupling Identification: Gaps between operational shifts (R, Pr, N) and formal definitions (M, P) highlight where the “Living Law” has decoupled from the written statute, revealing systemic risk or opportunities for regulatory arbitrage.

7.4 The No Direct Equivalent Threshold

The No Direct Equivalent Threshold is the mathematical boundary (d = 3.0) represented by the “Unique” (Blue/Yellow) bands. This state is defined by a total failure of the conjunctive overlap between Morphology/Legal Definition (M) and Teleology/Legal Purpose (P), where legal concepts are strictly orthogonal. On the 1980–2030 timeline, a persistent Stable Equivalence (Vlegal = 0) at this level indicates that these structural variables remain fixed in a divergent state. This signifies that no degree of adjustment to the operational variables (R, Pr, N) is sufficient to bridge the jurisdictional gap.
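Detecting a persistent stay at the threshold can be sketched as a check over a (year, d) series. The sketch assumes that "persistent" means every observation sits at or beyond d = 3.0 across a minimum span of years; `min_span_years` is a hypothetical, researcher-chosen cutoff, and `persistently_unique` is an illustrative name.

```python
NO_DIRECT_EQUIVALENT = 3.0  # d-score boundary of the "Unique" (Blue/Yellow) bands


def persistently_unique(series: list[tuple[int, float]], min_span_years: int) -> bool:
    """True if every (year, d) observation sits at or beyond d = 3.0 for at least
    min_span_years (a hypothetical persistence cutoff chosen by the researcher)."""
    if len(series) < 2:
        return False  # one observation cannot show persistence over time
    years = [year for year, _ in series]
    at_threshold = all(d >= NO_DIRECT_EQUIVALENT for _, d in series)
    return at_threshold and max(years) - min(years) >= min_span_years


print(persistently_unique([(1980, 3.0), (2005, 3.0), (2030, 3.0)], 25))  # True
```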

8.0 Scholarly Authentication: The Human-in-the-Loop (HITL) Seal

While the computational engine provides the scale for digital analysis, the Legal Distance (d) and Convergence Vector (Vlegal) are categorized as “Raw Algorithmic Output” until they undergo formal Scholarly Authentication. This phase represents the “A” (Classical) component of the A + B = C methodology, providing the necessary human audit to satisfy the duty of technological competence and doctrinal integrity.

8.1 The Jurisprudential Audit (Cross-Reference)

The Authenticator(s)—a qualified legal professional(s) or subject-matter expert(s) with advanced legal training and law degrees in the relevant jurisdictions—must subject all comparative outputs to a Jurisprudential Audit. This audit serves as the mandatory independent verification required by ABA Formal Op. 512 and Article 14 of the EU AI Act.

The verification standards for this audit—consisting of Pillar 1: Doctrinal Integrity, Pillar 2: Jurisprudential Synthesis, and Pillar 3: Ethical Accountability—are detailed in Section 6.3 (Protocol C).

8.1.1 The Principle of Dynamic Falsifiability

To maintain scientific rigor and satisfy the requirement of falsifiability, the d-score must be treated as a dynamic “scientific hypothesis” rather than a static opinion. Under this protocol, both scholarly disagreement and the emergence of New Evidence (E)—such as a shift in Legal Definition (M), Legal Purpose (P), or a Practical Outcome divergence (R, Pr, N)—are transformed into a Virtuous Feedback Loop, where every variable update results in a higher-fidelity calibration of the d-score.

To perform a recalibration, the Authenticator must follow the 5-step loop detailed in Section 8.4 (Bayesian Recalibration: Updating the Algorithmic Filter), which treats the original score as the Bayesian Prior (P0) and updates it with the New Evidence (E) to reach a new Posterior (Ppost) ‘Ground Truth’.
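The prior-to-posterior step at the heart of that loop can be illustrated with a conjugate Gaussian update. This is a swapped-in technique chosen for clarity, not the Section 8.4 procedure: it assumes both the existing d-score (P0) and the d-score implied by the New Evidence (E) can be expressed as a mean with a variance, and it returns the precision-weighted posterior (Ppost). `Belief` and `recalibrate` are hypothetical names, and the result is not clamped to the d-scale.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Belief:
    mean: float      # expected d-score
    variance: float  # uncertainty around that d-score


def recalibrate(prior: Belief, evidence_d: float, evidence_variance: float) -> Belief:
    """Normal-Normal conjugate update: precision-weighted average of prior and evidence."""
    if prior.variance <= 0 or evidence_variance <= 0:
        raise ValueError("variances must be positive")
    prior_precision = 1.0 / prior.variance
    evidence_precision = 1.0 / evidence_variance
    post_precision = prior_precision + evidence_precision
    post_mean = (prior_precision * prior.mean
                 + evidence_precision * evidence_d) / post_precision
    return Belief(mean=post_mean, variance=1.0 / post_precision)


print(recalibrate(Belief(2.0, 0.25), 1.0, 0.25))  # Belief(mean=1.5, variance=0.125)
```

With equally certain prior and evidence, the posterior lands halfway between them and its variance halves; more precise evidence pulls the posterior further toward E.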

8.2 Professional Adoption and Professional Liability

The core function of Scholarly Authentication is the formal transition of intellectual property and professional responsibility from raw algorithmic output to a verified work of human authorship. By authenticating the results, the researcher performs the following legal and ethical actions:

- Assumption of Liability: The Authenticator(s) formally adopts the assigned variables (M, P, R, Pr, N) and the resulting d-score as their own Formal Jurisprudential Opinion.

- Intellectual Responsibility: The researcher assumes full intellectual and professional liability for the doctrinal accuracy of the comparison, satisfying the Human-in-the-Loop (HITL) oversight required for high-risk legal engineering.

- Verification of Origin: This process ensures the output is a formal work product of a Qualified Professional rather than an unauthenticated machine result, mitigating the risk of the unauthorized practice of law (UPL).

8.3 Intellectual Property & The Declaration of Authentication

The act of Scholarly Authentication transforms a dataset into an original work of authorship. Through the selection, coordination, and arrangement of legal data points and the authorship of interpretive findings, the Authenticator(s) creates a protected work under 17 U.S.C. § 101 et seq. (Copyright).

To formalize this status, the platform utilizes a Declaration of Scholarly Authentication, which: